Quality by Design, Validation, and PAT: operational, statistical, and engineering perspectives

Posted: 2 August 2008 | Ron Branning, Lynn Torbeck and Cliff Campbell

Dr. Janet Woodcock’s exhortation (ISPE Annual Meeting, 7 November 2005) that 21st century life science manufacturers should be ‘maximally efficient, agile and flexible’ and produce high quality drug products ‘without extensive regulatory oversight’ has been widely reported as a wake-up call to our industry.

This article responds to this challenge by defining specific common-sense strategies that pharmaceutical engineers and technologists can adopt to reach those standards. In particular, it shows how Quality by Design (QbD) concepts can be applied to process, data and equipment systems to generate self-validating outputs. It also addresses the implications of FDA’s Process Analytical Technology (PAT) guidance, with particular emphasis on Right First Time.

This article presents the opinions of three authors with extensive experience in the areas of life science manufacturing, statistics, and engineering. While each section has been written independently, the article is a collaborative effort that offers a shared conclusion.

Section 1: Quality by Design (QbD) in validation planning

The mention of ‘validation’ evokes a range of responses among life science professionals, from anxiety and confusion to fear and wonder. The reason may lie in the FDA’s 1977 definition: ‘validation is establishing documented evidence that a system will do what it purports to do.’ The breadth of interpretation of this seemingly simple statement, and the numerous attempts over the years to clarify it, have only made matters worse. Regulators, practitioners and consultants have all played a role in elevating validation to cult status.

The fact that the latest chapter in the continuing validation saga (FDA’s PAT initiative to emphasise science and technological innovation) has only added to the confusion shows that the industry has failed to grasp the essence of FDA’s 30-year-old validation message: manufacturers must understand their processes and control variability.

This section reviews current practice in a number of validation areas and suggests how our efforts can be streamlined to save time, effort and resources while preserving and satisfying FDA’s core expectations. A sample project illustrates how a QbD-driven approach, at the organisational level and in equipment design and construction, can simplify and add value to the validation process. Finally, the section discusses how designing quality into our workflows delivers an integrated validation framework that serves the industry and satisfies current and emerging regulatory expectations.

QbD at the organisational level

Validation calls almost as many departments home as there are interpretations of what the term itself really means. The problem is not so much which department ‘owns validation,’ but more the need for cross-functional interaction and cooperation to prioritise, execute and archive the validation workload. Departments with key roles within validation include:

- Process Development

- Engineering

- Manufacturing

- Quality

While any one of these can have overall responsibility for validation administration and oversight, each must be an active participant at the compilation and implementation level. Most discussions of what validation is and how to document it begin with ‘roles and responsibilities’ and end with ‘review and approval’ accountability. The discussions are endless and in many cases pointless. All too often, the organisational reality of validation is captured by a paraphrase of the old saying: ‘the validation debate has a thousand parents, but validation is an orphan.’ Without explicitly defined roles, and in the absence of standard specifications and methodologies, the quality and value of our validation deliverables remain poor, often leading to unnecessary duplication of testing. More significantly, the result is costly validation gaps that consume the scarce resources of industry and regulators alike.

Any manufacturing-related department can administer an effective validation system. Having a well defined system with clearly delineated roles and responsibilities is more important than its physical or organisational location. It is essential that the system defines where one department’s roles and responsibilities end and where another’s begins, from beginning to end of the validation life cycle. Specifically, it must provide for a double handshake across departmental boundaries to ensure that information flows in both directions and that the handoff is acknowledged by both the supplier and the recipient.

The following very simple example of a tank order shows how QbD-driven criteria can be incorporated into the validation workflow.

- A user specifies a tank, including its total and working volume, temperature control target and range, pH requirements, and mixing conditions

- Process Engineering reviews the user requirements, finds a certified designer and fabricator with appropriate experience and prepares a specification for a bid proposal

- Process Development reviews the user requirements, the process engineering specifications and the process technology data to ensure that the user and process engineer have defined the vessel appropriately

- Quality Assurance compares the documentation with the approved tank standards for the intended use and signs off on the user requirements and specifications package, returns it to engineering for design and notifies all the stakeholders of the approval.
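The double handshake in this workflow can be modelled as a record of each handoff that counts as complete only when both the supplying and the receiving department have acknowledged it. The sketch below is a minimal illustration under that assumption; the `Handoff` class, its fields and the deliverable names are hypothetical and do not describe any particular validation system.

```python
from dataclasses import dataclass, field


@dataclass
class Handoff:
    """One transfer of a deliverable across a departmental boundary (hypothetical model)."""
    deliverable: str
    supplier: str
    recipient: str
    acknowledged_by: set = field(default_factory=set)

    def acknowledge(self, department: str) -> None:
        # Only the two parties to the handoff may sign it off.
        if department not in (self.supplier, self.recipient):
            raise ValueError(f"{department} is not a party to this handoff")
        self.acknowledged_by.add(department)

    @property
    def complete(self) -> bool:
        # Double handshake: both supplier and recipient must have acknowledged.
        return {self.supplier, self.recipient} <= self.acknowledged_by


# The tank-order workflow expressed as a chain of handoffs (illustrative names).
workflow = [
    Handoff("User requirements", "User", "Process Engineering"),
    Handoff("Bid specification", "Process Engineering", "Process Development"),
    Handoff("Reviewed specification package", "Process Development", "Quality Assurance"),
    Handoff("Approved package", "Quality Assurance", "Process Engineering"),
]


def open_handoffs(steps):
    """Return the deliverables whose handoff is still missing an acknowledgement."""
    return [h.deliverable for h in steps if not h.complete]
```

Listing the open handoffs at any point shows exactly where information has been sent but not yet confirmed, which is the gap the double handshake is meant to close.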

QbD in construction

Tank fabrication should be based on a formally approved and qualified design. Clear specifications that set out not only the type and materials, but also the functional and environmental constraints on the tank’s intended use, ensure that the fabricator delivers an asset that is fit for use and Right First Time. If the specifications are unclear, a tank may be built with a total volume equal to what was intended as its working volume: 5,000 L in total instead of 6,000 L. An incomplete specification might result in a tank with thermowells and probe positions in locations that are expedient for fabrication and installation but fail to satisfy more important operational and maintenance requirements.
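To show how an explicit specification can catch the total-versus-working-volume mix-up before fabrication, the sketch below checks a tank specification for internal consistency. The field names and the 15% minimum headspace rule are illustrative assumptions, not an industry standard; a real specification would take its rules from the approved tank standards.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TankSpec:
    """Hypothetical tank specification; volumes in litres, temperatures in degrees C."""
    total_volume_l: float
    working_volume_l: float
    temp_target_c: float
    temp_range_c: tuple  # (low, high) control range
    min_headspace_fraction: float = 0.15  # assumed design rule, for illustration only

    def problems(self) -> list:
        """Return a list of specification errors; an empty list means the spec is consistent."""
        issues = []
        if self.working_volume_l >= self.total_volume_l:
            issues.append("working volume must be less than total volume")
        elif (self.total_volume_l - self.working_volume_l) / self.total_volume_l < self.min_headspace_fraction:
            issues.append("headspace below the assumed minimum fraction")
        low, high = self.temp_range_c
        if not low <= self.temp_target_c <= high:
            issues.append("temperature target outside its control range")
        return issues


# 6,000 L total with 5,000 L working volume passes; 5,000 L total with 5,000 L working does not.
print(TankSpec(6000, 5000, 37.0, (35.0, 39.0)).problems())
print(TankSpec(5000, 5000, 37.0, (35.0, 39.0)).problems())
```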

Putting it all together

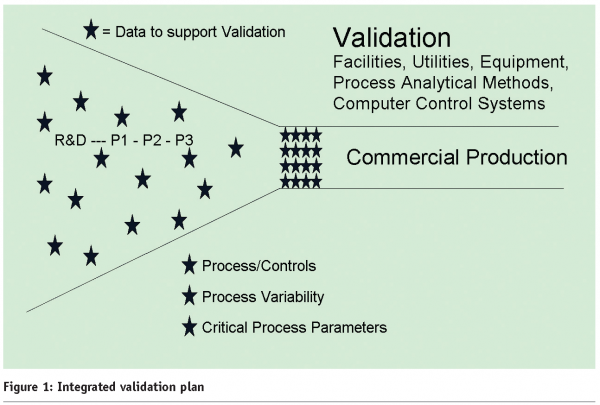

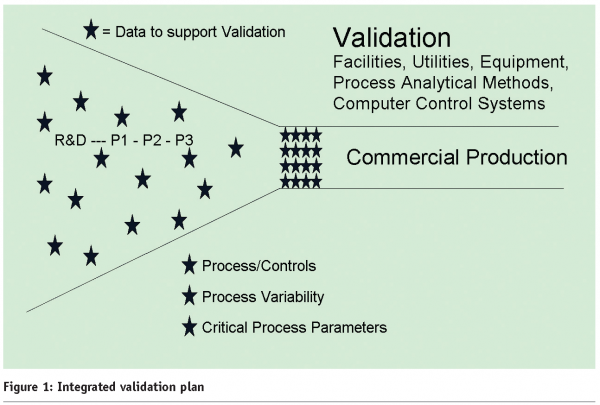

The data to support validation is collected throughout process development as each department applies its requirements. Specifications for the equipment type (open or closed vessel) and materials of construction (glass or stainless steel) are determined at the very beginning. The scale-up to clinical and ultimately commercial quantities in turn dictates facility and utility requirements as well as improved analytical tools and process/computer controls. The objective of R&D and Clinical Studies is to bring safe and effective products to market. The goal of the Process Development group is to take a small-scale process with wide variability and bring it into a state of control for large-scale commercial production.

At each step, valuable information is collected in lab notebooks and on analytical data sheets. All this data can be used to identify sources of variability and critical process parameters (time, temperature, pH, flow rate). The challenge for Validation is to glean all the process variability knowledge from the development process and apply it to controlled, statistically valid experiments that result in a robust, well monitored, well analysed and controlled process producing consistent product.

Developing and applying agreed industry standards for specification and validation would:

- yield reliable, self-validating outputs at each stage of the development process

- eliminate redundancy in testing and documentation

- eliminate ambiguity in specifications

- eliminate gaps and overlaps in departmental roles and responsibilities

- allow regulators to audit the standards rather than individual tests

- save companies time and resources

- adapt readily to innovation

Figure 1 shows the scattered variable data points in development that are brought into tighter alignment and control in commercial production.

Lynn Torbeck makes a persuasive argument in Section 2 of this article for using Design of Experiments (DoE), that is, conducting and analysing statistically designed tests to evaluate the factors that control the value of a parameter or group of parameters, to identify Critical Process Parameters (CPPs). The earlier DoE is used within process development, the more quickly and cheaply data can be gathered before scale-up. This allows the development of standards (defined and adjusted by DoE-derived data) that can apply to all stages of production and streamline validation and documentation.
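As a minimal illustration of DoE in practice, the sketch below builds a two-level full factorial design and estimates each factor’s main effect. The factor names echo the tank example; the responses are generated from an assumed model (yield rises with temperature, falls with pH and ignores mixing), not from real data, so the effects it recovers are known in advance.

```python
from itertools import product
from statistics import mean


def full_factorial(factors):
    """All 2**k runs of a two-level design, in coded units (-1 = low, +1 = high)."""
    return [dict(zip(factors, levels)) for levels in product((-1, 1), repeat=len(factors))]


def main_effects(design, responses):
    """Main effect of each factor: mean response at +1 minus mean response at -1."""
    effects = {}
    for factor in design[0]:
        high = [y for run, y in zip(design, responses) if run[factor] == 1]
        low = [y for run, y in zip(design, responses) if run[factor] == -1]
        effects[factor] = mean(high) - mean(low)
    return effects


factors = ["temperature", "pH", "mixing"]
design = full_factorial(factors)  # 8 runs
# Assumed model for illustration: yield = 80 + 3*temperature - 1.5*pH (mixing has no effect).
responses = [80 + 3 * run["temperature"] - 1.5 * run["pH"] for run in design]
print(main_effects(design, responses))  # → {'temperature': 6.0, 'pH': -3.0, 'mixing': 0.0}
```

Eight runs are enough to separate a large effect (temperature), a smaller opposing one (pH) and a non-effect (mixing), which is the kind of evidence used to decide which parameters are critical.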

QbD and PAT

The FDA has consistently required companies to have:

- a detailed understanding of their manufacturing processes, including the parameters necessary for control

- defined the critical process parameters that are responsible for process variability that impacts product quality

- set up an appropriate monitoring and control system to ensure a robust process and consistent, compliant product

It is no coincidence, therefore, that these three requirements lie at the intersection of validation and PAT. PAT can properly be understood as simply a quid pro quo between the FDA and industry. The FDA is offering regulatory flexibility to industry in exchange for substantive proof of process understanding, analysis and control, allied with demonstrated product quality and compliance. The advantage to industry is that the FDA is offering to smooth the regulatory approval pathway for innovations in process and analytical technology, which have been a source of contention between industry and regulators for decades. In effect, PAT lowers a regulatory approval bar that industry has long considered too high for innovation.

The path forward for companies struggling with validation and confused about the application of PAT is threefold:

- Develop an integrated validation plan.

- Select the appropriate statistical tools to conduct and analyse the results of multivariate experiments.

- Enhance the engineering systems that initiate, support, and maintain the validated state of processes and associated infrastructure.

This approach first establishes a complete understanding of the process and only then addresses the implementation of PAT.

Integrated Validation Plan

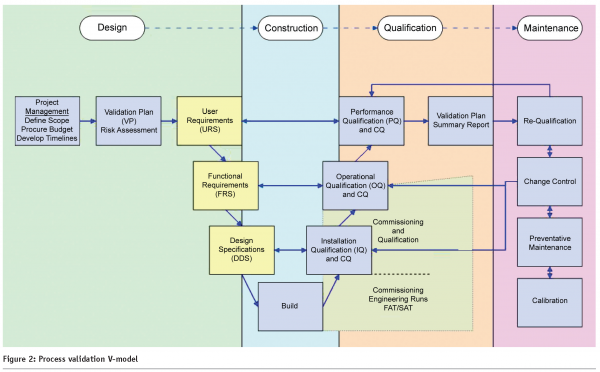

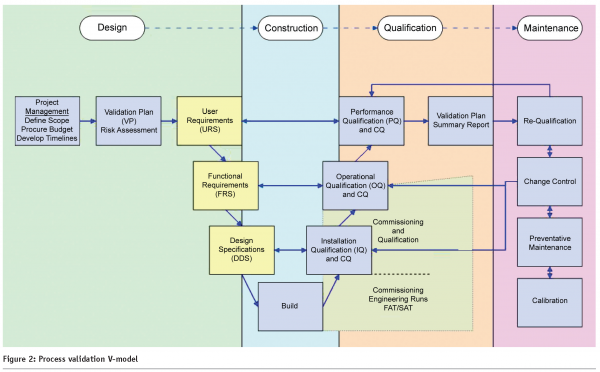

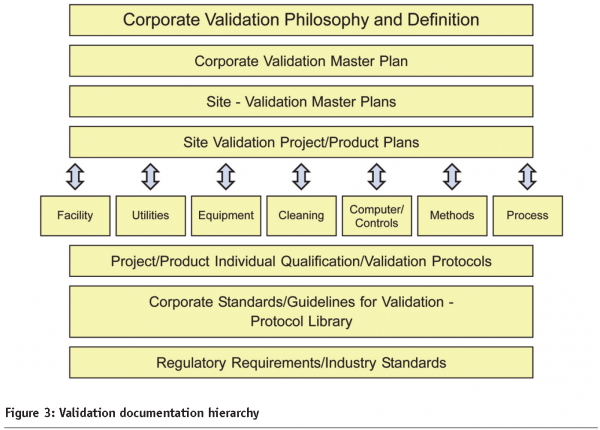

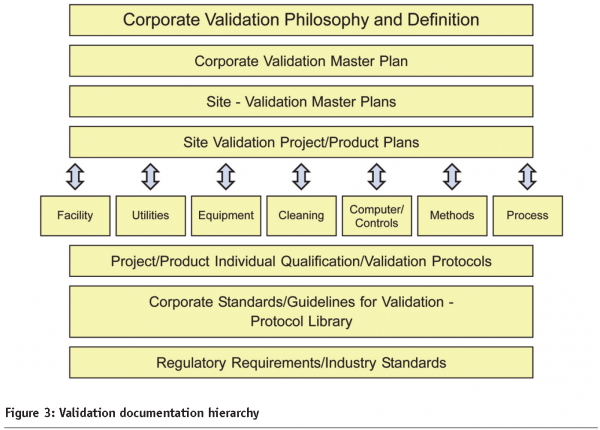

While validation execution would follow the industry standard V-Model shown in Figure 2, a fully integrated validation plan would include:

- A policy that includes a description of the company’s philosophy and validation approach, including QbD and risk assessment/risk management (ICH Q8 & Q9)[1]

- The master plan for validation (EU Annex 15)[2]

- Site validation master plans (and specific project/product plans) that link all development, production, and quality control data that support the current facility, utilities, equipment, cleaning, control systems, methods, and processes for site products, including individual protocols and summaries

- Validation standards (technical documents and a protocol template library) based on regulatory requirements and industry standards

- An organisational structure (Development, Engineering, Production, Quality, and Statistics) for the validation team, including roles, responsibilities, and handshakes

Figure 3 is also relevant, as it shows typical interrelationships within an integrated validation documentation hierarchy.

A validation program based on QbD provides the platform for PAT applications. Once the process is understood and the critical control points and critical process parameters have been defined, decisions can be made about which PAT innovations to adopt.

How to begin a PAT assessment

- Select a process step or unit operation.

- Map that section of the process or operation, including the preceding and subsequent operations.

- Conduct a risk assessment on the selected process or operation.

- Collect and assess existing process or operation data, including CPPs.

- Determine what additional data are required for better process understanding, including CPPs.

Only then would you be ready to make a PAT innovation assessment.
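The article does not prescribe a risk assessment method. Failure Mode and Effects Analysis (FMEA), which ranks failure modes by a risk priority number (severity × occurrence × detection), is one common choice and is swapped in here purely for illustration. The 1 to 10 scales, the action threshold of 100 and the failure modes themselves are hypothetical; each company defines its own scales and thresholds.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FailureMode:
    """One row of an FMEA worksheet; each score is on an assumed 1-10 scale."""
    description: str
    severity: int
    occurrence: int
    detection: int  # 10 = hardest to detect

    @property
    def rpn(self) -> int:
        # Risk priority number: the conventional FMEA product of the three scores.
        return self.severity * self.occurrence * self.detection


def prioritise(modes, threshold=100):
    """Return failure modes at or above an assumed RPN action threshold, highest first."""
    flagged = [m for m in modes if m.rpn >= threshold]
    return sorted(flagged, key=lambda m: m.rpn, reverse=True)


modes = [
    FailureMode("Temperature excursion during granulation", 7, 4, 5),
    FailureMode("pH drift in buffer preparation", 6, 3, 3),
    FailureMode("Over-mixing shears the product", 8, 2, 7),
]
for m in prioritise(modes):
    print(f"{m.rpn:4d}  {m.description}")
```

The flagged failure modes point to the unit operations where existing CPP data should be assessed first and where additional data are most likely to be needed.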

Regulatory Awareness

QbD-driven validation requires heightened awareness of and responsiveness to emerging regulatory trends and initiatives. The FDA’s Pharmaceutical Quality Assessment System (PQAS), presented by Vibhakar Shah to the Agency’s Advisory Committee for Pharmaceutical Science (ACPS)[3] in October 2005, is a case in point.

From a manufacturing perspective, PQAS poses the following questions:

- Appropriateness of process design?

- Appropriateness of in-process test acceptance criteria and CPP ranges?

- Adequacy of relevant environmental controls, e.g., for moisture or oxygen sensitive formulation?

- Suitability/capability of control strategy?

- Strategy for continuous improvement within the design space?

If we’re not already doing so, we should start aligning our validation and ongoing metrics programs accordingly, regardless of our commitment to formal PAT. In Dr. Woodcock’s efficient, agile and flexible environment, the need to recast corporate policies, plans and procedures ought to be viewed as an opportunity rather than an obstacle. In any case, the adoption of 21st century guidance need not automatically apply to legacy products and systems.

Real Time Release (RTR), which is a logical extension of Parametric Release and no more contentious, is another aspect of PQAS that should be factored into our process maps. Again based on the ACPS presentation, 21st century technology transfer and validation efforts should be designed to take the following expectations into account:

- Ability to evaluate and ensure acceptable quality of in-process and/or final product based on process data, which includes a valid combination of:

  - assessment of material attributes by direct and/or indirect process measurements

  - assessment of critical process parameters and their effect on in-process material attributes

  - process controls

- Combined process measurements and other test data generated during manufacturing can serve as the basis for RTR of the final product

- Together, these data demonstrate that each batch conforms to established quality attributes
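To make the RTR expectations concrete, the sketch below predicts a quality attribute from process measurements with a pre-established linear model and releases the batch only if every CPP is within its range and the prediction meets specification. The model form, coefficients, parameter names (`nir_signal`, `blend_min`) and limits are all hypothetical; a real model would be derived from designed experiments and validated before use.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LinearModel:
    """Assumed calibration: attribute = intercept + sum(coefficient * measurement)."""
    intercept: float
    coefficients: dict

    def predict(self, measurements: dict) -> float:
        return self.intercept + sum(c * measurements[name] for name, c in self.coefficients.items())


def rtr_decision(model, measurements, cpp_ranges, spec):
    """Return (release?, predicted attribute) under the assumed model, CPP ranges and spec."""
    in_range = all(lo <= measurements[name] <= hi for name, (lo, hi) in cpp_ranges.items())
    predicted = model.predict(measurements)
    lo, hi = spec
    return in_range and lo <= predicted <= hi, predicted


# Hypothetical model of assay (% label claim) from an NIR reading and blend time in minutes.
model = LinearModel(intercept=60.0, coefficients={"nir_signal": 40.0, "blend_min": 0.2})
cpp_ranges = {"nir_signal": (0.9, 1.1), "blend_min": (10.0, 20.0)}
batch = {"nir_signal": 0.98, "blend_min": 15.0}
release, assay = rtr_decision(model, batch, cpp_ranges, spec=(95.0, 105.0))
print(release, round(assay, 1))
```

Requiring both conditions reflects the "valid combination" above: a prediction inside specification is not accepted on its own if a critical process parameter has left its validated range.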

FDA’s Aseptic Processing Guide (2004)[4] is another topical example that challenges organisational response mechanisms. In this case, the guide is explicit in several areas, yet a time lag exists in translating these requirements into succinct, testable criteria, with key commitments often diluted or lost in translation. The following two examples, taken directly from the guide, illustrate the point.

Airlock

A small room with interlocked doors is constructed to maintain air pressure control between adjoining rooms (generally with different air cleanliness standards). The intent of an aseptic processing airlock is to preclude ingress of particulate matter and microorganism contamination from a lesser controlled area.

From an architectural, HVAC and metrology standpoint, there is enough here to be going on with in regard to risk-based compliance, and yet our system specifications/protocols are rarely so concise or focused.
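As an example of turning the airlock definition into succinct, testable criteria, the sketch below checks logged differential-pressure readings against a minimum and checks that the two interlocked doors were never open together. The 10 Pa minimum and the data formats are illustrative assumptions; the actual limit should come from the approved system specification.

```python
def pressure_excursions(readings_pa, minimum_pa=10.0):
    """Return (index, value) pairs where the differential pressure fell below the minimum.

    readings_pa: differential pressure in pascals, airlock minus the lesser-controlled
    adjoining room (positive means air flows from the airlock toward that room).
    minimum_pa: assumed acceptance limit, for illustration only.
    """
    return [(i, p) for i, p in enumerate(readings_pa) if p < minimum_pa]


def door_interlock_ok(door_states):
    """Interlock criterion: the two airlock doors are never open at the same time.

    door_states: sequence of (inner_open, outer_open) booleans sampled over time.
    """
    return not any(inner and outer for inner, outer in door_states)


readings = [14.2, 13.8, 9.1, 12.5]
print(pressure_excursions(readings))  # → [(2, 9.1)]
print(door_interlock_ok([(True, False), (False, True)]))  # → True
```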

Batch monitoring and control

Various in-process control parameters (e.g., container weight variation, fill weight, leakers, air pressure) provide information to monitor and facilitate ongoing process control. It is essential to monitor the microbial air quality. Samples should be taken according to a comprehensive sampling plan that provides data representative of the entire filling operation. Continuous monitoring of particles can provide valuable data relative to the control of a blow-fill-seal operation.

Again, the standard response is crude and untraceable. Check your own monitoring and control process to see if it explicitly itemises the following elements with associated instrumentation, units of measurement, and trend/archive frequencies predefined. If it does, you’re top of the class.

- Container weight

- Container weight variation

- Fill weight

- Leakers

- Air pressure

- Microbial air quality
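The checklist above can be held as a structured monitoring plan in which an element only counts as itemised once its instrument, unit and trend/archive frequencies are all predefined. The sketch assumes that structure; the instrument tags, units and frequencies in the usage example are hypothetical placeholders.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class MonitoredElement:
    name: str
    instrument: Optional[str] = None  # hypothetical instrument tag
    unit: Optional[str] = None
    trend_frequency: Optional[str] = None
    archive_frequency: Optional[str] = None

    def missing(self):
        """Names of the fields that have not yet been predefined."""
        return [f for f in ("instrument", "unit", "trend_frequency", "archive_frequency")
                if getattr(self, f) is None]


REQUIRED = ["Container weight", "Container weight variation", "Fill weight",
            "Leakers", "Air pressure", "Microbial air quality"]


def plan_gaps(plan):
    """Map each required element to its missing fields; absent elements are 'not itemised'."""
    by_name = {e.name: e for e in plan}
    gaps = {}
    for name in REQUIRED:
        element = by_name.get(name)
        if element is None:
            gaps[name] = ["not itemised"]
        elif element.missing():
            gaps[name] = element.missing()
    return gaps


plan = [MonitoredElement("Fill weight", "WT-101", "g", "per batch", "daily"),
        MonitoredElement("Air pressure", "PT-201", "Pa")]
for name, gap in plan_gaps(plan).items():
    print(f"{name}: {', '.join(gap)}")
```

An empty result from `plan_gaps` is the "top of the class" condition described above.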

This section has presented ways to reform, not reinvent, the validation process, taking account of the FDA’s plea for good science, expressed consistently since 1977. Economy and common sense should be inherent in the process, both of which confirm that validation should be embedded in workflows rather than treated as a just-too-late, after-the-fact activity. The validation response must be commensurate with risk; therefore, emphasis has been given to the process-understanding aspects of PAT, duly supported by statistical analysis and design of experiments, rather than the technology itself, sophisticated though it may be. Regarding the engineering/validation disconnect, the discussion has advocated the development of explicit QbD standards allied with mutual handshakes across the life cycle as the only logical solution to this contentious issue.

Section 2: Engineering statistics and chemometrics

Introduction

This section describes how engineering statistics and chemometrics (the application of system theory and applied statistics to the large volumes of data collected in chemical processes) are currently viewed in pharmaceutical quality and manufacturing and recommends how they should be regarded. Applied engineering statistics is discussed as an essential tool that all quality and manufacturing staff should use routinely. The role of engineering statistics is described under the following headings:

- Organisation

- Variability

- Specifications

- Statistical data analysis

- Control charts

- Sampling

Organisation

Current State

Typically, statisticians in the pharmaceutical industry work and operate in three specialised areas within the discipline of statistics:

- Clinical-trial statisticians are the most numerous and support human trials.

- Pre-clinical statisticians support research and development, including animal trials.

- Physical science statisticians, including Certified Quality Engineers, Certified Reliability Engineers, Six Sigma Green Belts, Black Belts and Master Black Belts, and external consultants, are the smallest group. They support quality control, quality assurance, validation, manufacturing, pharmaceutical technology, process engineering, process improvement, and Process Analytical Technology (PAT)[5].

Most companies have few statisticians working in these areas, so most projects and project teams do not benefit from their expertise. Although some colleges now provide courses in chemometrics, the majority of chemists and engineers have not taken courses in applied engineering statistics or chemometrics, and this training has not been required for professional chemists and engineers. The attitude is often ‘if statistics were so important to success, my major professor would have required me to use it.’ Those who take college statistics courses are often frustrated to find that the course is too theoretical and seems to have little practical application[6].

Desired State

“Statistical thinking will one day be as necessary for efficient citizenship as the ability to read and write.” H. G. Wells

In the desired state, the industry acknowledges the need for support specialists in engineering statistics and chemometrics, but also expects professionals to be familiar with basic applied statistics. Chemists and engineers should design and analyse their own data collection experiments with a consulting statistician reviewing the draft protocols and draft reports.

Variability

Past State

In 1978, the Code of Federal Regulations (21CFR211.110(a)) had this important statement:

‘To assure batch uniformity and integrity of drug products, written procedures shall be established and followed that describe the in-process controls, and tests or examinations to be conducted on appropriate samples of in-process materials of each batch. Such control procedures shall be established to monitor the output and to validate the performance of those manufacturing processes that may be responsible for causing variability in the characteristics of in-process material and drug product.’

Few people read the above paragraph from the Good Manufacturing Practice (GMP) regulations and realise that the FDA was telling companies to reduce the variability of their manufacturing processes. To make the point explicit, Ed Fry[7], while working at the FDA as Director, Division of Drug Quality Compliance, wrote this in 1985:

‘A set of specifications, limits, or ranges on desired characteristics is required. Then, the processes that cause that variability in those characteristics must be identified. Experiments are conducted (that is validation runs) to assure that factors that would cause variability are under control, and will result in an output that meets the specifications within the limits of the ranges that you had previously established.’

He continued, ‘What is it that has to be validated? Let me again cite what the GMP regulations say. The regulations require validation of those processes responsible for causing variabilities in characteristics of in-process materials or finished products. In some cases, it’s easy to identify what causes variability, but in other cases, it may not be so easy. However, the regulations imply that not everything that takes place in a pharmaceutical manufacturing plant causes variability. Therefore, some things don’t have to be validated. We never intended to require that everything [that] takes place in a manufacturing operation is subject to a validation study.’

It doesn’t get much clearer than that.

Current State

Variability is seldom considered an essential part of a validation study, and when it is, it is treated as ‘inherent’, meaning that nothing can be done about it. Unless the variability is wildly excessive, no effort is made to reduce it.

Desired State

It is reasonable to assume that the 2004 PAT guidance was written to get companies back on track with the original intent of the 1978 GMPs, namely that one goal of validation is to reduce variation. The PAT guidance includes this statement:

‘A process is generally considered well understood when (1) all critical sources of variability are identified and explained; (2) variability is managed by the process; and (3) product quality attributes can be accurately and reliably predicted over the design space …’

Management should adopt the mantra, ‘where is the variability coming from and what specifically have you personally done today to eliminate it or reduce it?’
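A practical first step toward answering "where is the variability coming from?" is to split total variability into its sources. The sketch below uses the standard one-way random-effects analysis of variance for a balanced design (the same number of samples from each batch) to estimate between-batch and within-batch variance components. The three batches of two results each are made-up illustrative numbers.

```python
from statistics import mean


def variance_components(batches):
    """Estimate between- and within-batch variance for a balanced one-way random-effects design.

    batches: list of equal-length lists, one list of measurements per batch.
    Returns (between_batch_variance, within_batch_variance).
    """
    a = len(batches)
    n = len(batches[0])
    if a < 2 or n < 2 or any(len(b) != n for b in batches):
        raise ValueError("need at least 2 batches with the same number (>= 2) of samples each")
    batch_means = [mean(b) for b in batches]
    grand_mean = mean(batch_means)
    # Within-batch mean square: pooled scatter of results around their own batch mean.
    ms_within = sum((y - m) ** 2 for b, m in zip(batches, batch_means) for y in b) / (a * (n - 1))
    # Between-batch mean square: scatter of batch means around the grand mean, scaled by n.
    ms_between = n * sum((m - grand_mean) ** 2 for m in batch_means) / (a - 1)
    # A negative estimate is conventionally truncated to zero.
    between = max(0.0, (ms_between - ms_within) / n)
    return between, ms_within


print(variance_components([[99, 101], [104, 106], [94, 96]]))  # → (24.0, 2.0)
```

Here the between-batch component dwarfs the within-batch one, so effort is best directed at whatever differs from batch to batch (raw material lots, set-ups, operators) rather than at the measurement or sampling within a batch.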

Specifications

For the discussion of specifications, too, it is useful to review the GMP regulations issued in 1978 before considering present and desired conditions.

Past State

In 1978, the Code of Federal Regulations (21CFR211.110(b)) stated, ‘Valid in-process specifications for such characteristics shall be consistent with drug product final specifications and shall be derived from previous acceptable process average and process variability estimates where possible and determined by the application of suitable statistical procedures where appropriate. Examination and testing of samples shall assure that the drug product and in-process materials conform to specifications.’

This seems clear enough. Use your past historical data and good engineering statistical practice to calculate the specification criteria.

Current State

How many current specification criteria have actually been determined using ‘previous acceptable process average and process variability estimates’ (standard deviation) and ‘suitable statistical procedures’? Yet every day, quality assurance accepts or rejects millions of dollars’ worth of material and product using specification criteria without supporting evidence and without verifying or justifying the validity of those criteria.

Desired State

Engineering professionals should use past historical data and good engineering statistical practice to calculate specification criteria. If historical data do not exist, use statistically designed experiments (DoE) to collect the data and determine the design and response space, and from those, the appropriate specification criteria.
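A minimal sketch of deriving in-process limits from historical data, in the spirit of 21CFR211.110(b), is shown below: limits are set at the process average plus or minus k standard deviations and then checked for consistency with the final product specification. The choice k = 3 is an assumption for illustration; a more rigorous approach would use a statistical tolerance interval, which also accounts for the number of historical results.

```python
from statistics import mean, stdev


def derived_limits(history, k=3.0):
    """In-process limits as process average +/- k standard deviations (k is an assumption)."""
    if len(history) < 2:
        raise ValueError("need at least two historical results")
    centre, s = mean(history), stdev(history)
    return centre - k * s, centre + k * s


def consistent_with_final(limits, final_spec):
    """In-process limits should lie within (be consistent with) the final product specification."""
    (lo, hi), (f_lo, f_hi) = limits, final_spec
    return f_lo <= lo and hi <= f_hi


history = [98, 100, 102, 100]  # illustrative historical results, % label claim
limits = derived_limits(history)
print([round(x, 2) for x in limits], consistent_with_final(limits, (95.0, 105.0)))
```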

Statistical data analysis

Current State

All too often, raw data is presented without even the most elementary analysis. Further, a short-term view is fostered when historical data is not considered. Current data presented without reference to previous data is usually very misleading.

Desired State

Every engineering professional should have the basic statistical skills to present data in tables and graphs with appropriate summary numbers. Every presentation and report should tell a simple story in a compelling way.
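As a minimal example of presenting data with appropriate summary numbers, and of putting current data in the context of historical data, the sketch below prints a small summary table. The grouping into "historical" and "current" and the values themselves are illustrative assumptions.

```python
from statistics import mean, median, stdev


def summarise(values):
    """Basic summary numbers for one data set."""
    return {
        "n": len(values),
        "mean": mean(values),
        "median": median(values),
        "sd": stdev(values) if len(values) > 1 else float("nan"),
        "min": min(values),
        "max": max(values),
    }


def summary_table(groups):
    """Plain-text table with one row per group, e.g. historical versus current results."""
    header = f"{'group':<12}{'n':>4}{'mean':>9}{'median':>9}{'sd':>8}{'min':>8}{'max':>8}"
    rows = [header]
    for name, values in groups.items():
        s = summarise(values)
        rows.append(f"{name:<12}{s['n']:>4}{s['mean']:>9.2f}{s['median']:>9.2f}"
                    f"{s['sd']:>8.2f}{s['min']:>8.2f}{s['max']:>8.2f}")
    return "\n".join(rows)


print(summary_table({"historical": [99.1, 100.4, 98.7, 100.9, 99.8],
                     "current": [101.2, 101.8, 100.9]}))
```

Read side by side, the two rows tell a simple story: the current results sit above the historical range, a shift that a table of raw current values alone would hide.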

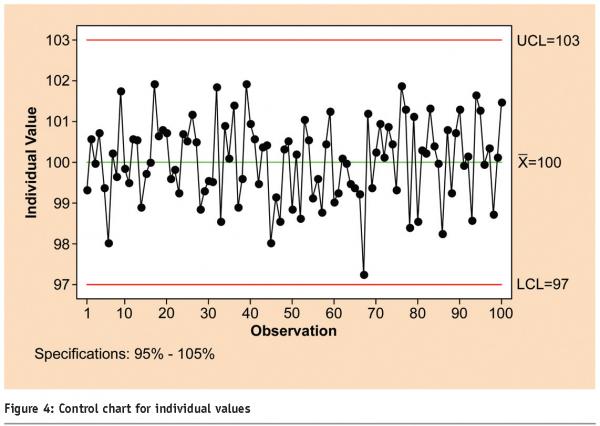

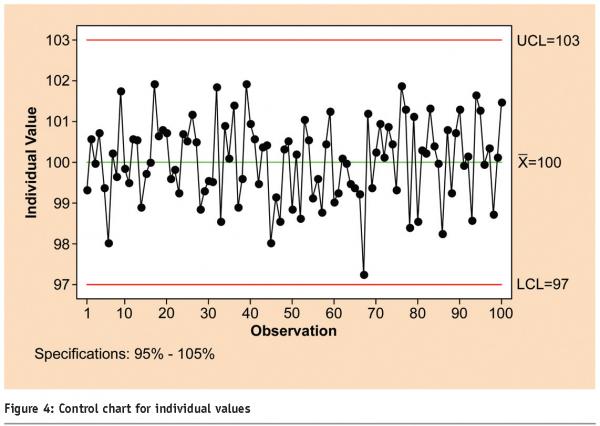

Control charts

Current State

The use of control charts seems to be spotty at best. While the GMPs refer to process control, there is no specific requirement that control charts be used. The regulation already quoted includes this:

‘To assure batch uniformity and integrity of drug products, written procedures shall be established and followed that describe the in-process controls, and tests, or examinations to be conducted on appropriate samples of in-process materials of each batch.’ 21CFR211.110(a)

The term ‘in-process controls’ can, of course, mean many things to many people, but it is not a stretch to include statistical control charts as part of the definition.

Desired State

The desired state is given with clarity in the PAT guidance as follows.

‘In a PAT environment, batch records should include scientific and procedural information indicative of high process quality and product conformance. For example, batch records could include a series of charts depicting acceptance ranges, confidence intervals, and distribution plots showing measurement results.’

Figure 4 is an example of a statistical control chart for individual values.
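For an individuals chart like the one in Figure 4, a common convention sets the centre line at the mean and the control limits at the mean plus or minus 2.66 times the average moving range (2.66 = 3/d2, with d2 = 1.128 for moving ranges of two consecutive points). The sketch below applies that convention and flags points outside the limits; the baseline data are illustrative.

```python
from statistics import mean


def individuals_limits(values):
    """Lower limit, centre line and upper limit for an individuals (X) chart.

    Uses the average moving range of consecutive points; 2.66 is 3 / d2 with d2 = 1.128
    for moving ranges of size 2.
    """
    if len(values) < 2:
        raise ValueError("need at least two values")
    moving_ranges = [abs(b - a) for a, b in zip(values, values[1:])]
    centre = mean(values)
    mr_bar = mean(moving_ranges)
    return centre - 2.66 * mr_bar, centre, centre + 2.66 * mr_bar


def out_of_control(values, limits):
    """Indices of points outside the control limits (the basic Shewhart rule only)."""
    lcl, _, ucl = limits
    return [i for i, v in enumerate(values) if v < lcl or v > ucl]


baseline = [10, 12, 11, 13, 12]
limits = individuals_limits(baseline)  # roughly (7.61, 11.6, 15.59)
print(out_of_control([10, 12, 20], limits))  # → [2]
```

Limits are normally computed from a stable baseline period and then applied to new batches; additional run rules (trends, shifts) can be layered on top of this basic check.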

Sampling

Current State

Statistical sampling plans might be the weakest link in the chain of quality and manufacturing evaluation. Almost all companies use some form of statistical sampling plan to take samples for measurement. Most use ANSI/ASQ Z1.4[8] for attributes; very few use ANSI/ASQ Z1.9[9] for variables. It is also rare for a company to have actually trained its quality staff in the correct design, use and understanding of these sampling plans. Yet the quality department makes decisions worth millions of dollars based on plans that are designed incorrectly and samples that might or might not truly represent the lots being sampled. The GMPs require that samples be representative, but once a sample has been taken, there is no way to tell whether it is representative. Only by watching the sample being taken can it be verified that it is truly representative.

Desired State

The GMPs in 21CFR211.25 require that all personnel be trained in the ‘particular operations that the employee performs…’ Because employees use sampling plans and take samples, they and their supervisors must be trained in the correct use of the sampling plans employed. This includes some understanding of the statistical principles underlying the sampling plans.
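One statistical principle worth understanding is the operating characteristic (OC) of a single sampling plan. For a plan that inspects n items and accepts the lot if at most c are defective, the probability of accepting a lot whose true defective fraction is p follows the binomial distribution. The sketch below uses that binomial model, which assumes the lot is large relative to the sample; the plan n = 50, c = 1 is chosen for illustration and is not taken from the Z1.4 tables.

```python
from math import comb


def prob_accept(n, c, p):
    """Probability that a lot with true defective fraction p is accepted by a single
    sampling plan (sample n, accept if defectives <= c), using the binomial model."""
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must be between 0 and 1")
    return sum(comb(n, d) * p**d * (1 - p) ** (n - d) for d in range(c + 1))


# Illustrative plan only: n = 50, c = 1.
for p in (0.005, 0.01, 0.02, 0.05, 0.10):
    print(f"defective rate {p:5.1%}: P(accept) = {prob_accept(50, 1, p):.3f}")
```

Even this plan accepts a lot containing 5% defectives roughly 28% of the time, which is exactly the sort of consequence that staff who use sampling plans, and their supervisors, should appreciate.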

This section has focused on engineering statistical areas and applications that are, or are not, used in the pharmaceutical industry for quality and manufacturing. Happily, the use of engineering statistics has increased in the last 10 years or so. However, this progress has been spotty. Only management can implement the desired state. Hopefully, the PAT guidance has created a new awareness that will stimulate companies into action, so that all companies use engineering statistics and chemometrics designs[10] to their own benefit and that of the ultimate consumer, the patient.

Section 3: Quality by Design (QbD) in engineering processes

This section compares the current and desired states of pharmaceutical engineering, responding to Dr. Woodcock’s admonition and addressing some of the observations in the previous discussions. The underlying premise is that historically, our manufacturing processes and procedures have been discontinuous and that our engineering practices have adopted an equivalent mindset, resulting in a number of self-inflicted and costly disconnects. By contrast, the desired state treats pharmaceutical engineering as continuous and self-evident, characteristics that will be developed and discussed under the following headings:

- Models

- Methods

- Materials

- Manpower

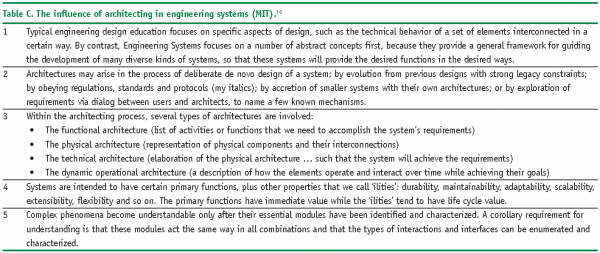

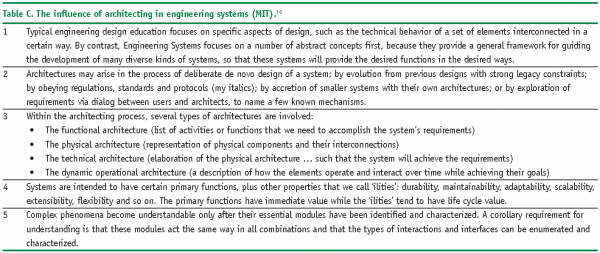

A basic example of applied QbD from an engineering point of view is also presented, along with an MIT benchmark.

Models

Current State

Although our Good Automated Manufacturing Practice (GAMP)[11] colleagues have been instrumental in delivering the ubiquitous V-Model, there is no formal engineering model to speak of in the current state. Several attempts have been made to extend the basic V to accommodate validation in general (see Figure 2), but what we really need is a scalable technical model that engineers can work with and remember.

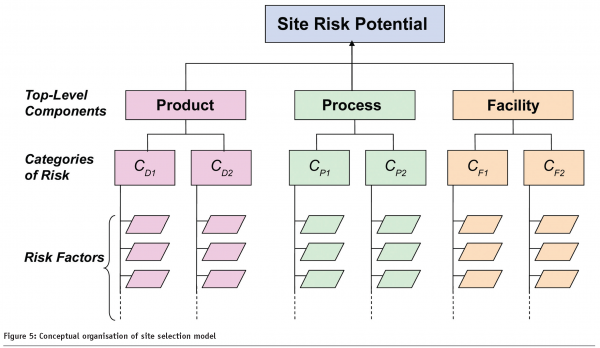

By comparison, the FDA’s Risk-Based Method for Prioritising cGMP Inspections[12] (Sept 2004) contains a pilot model aimed at categorising and assessing Site Risk Potential (SRP) as shown in Figure 5; the objective being to prioritise and schedule inspections and to eliminate subjectivity from the equation. This has direct engineering applicability, and can assist in the delivery of a Right First Time and sustainable asset base.

Desired State

The desired state calls for an ISPE-driven model that enables both existing and emerging pharmaceutical technology (including PAT) to be identified, categorised and documented across the GEP/GMP life cycle. The model should be compatible with the current state initiatives mentioned above, and capable of handling major and minor projects alike with efficiency, agility and flexibility as prerequisites.

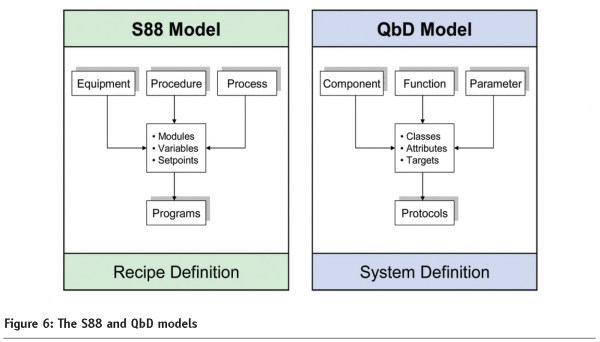

From an executable point of view, the ISA-S88 Batch Systems Standard[13] offers a powerful prototype, geared toward the modular definition and execution of automated processes. Figure 6 shows the model alongside a proposed QbD counterpart. The similarities are striking: both exploit standard configurable elements; both assign set-points to variables as a function of risk; and both are self-documenting, with programs being compiled in one case and protocols in the other. Most importantly, they are designed to be contemporaneous, with minimum delay or interference between their input, processing, and output layers.

S88 has had a transformative impact on recipe definition and execution in our industry, and a properly developed QbD equivalent would have a similar effect on pharmaceutical engineering. Such a model would enable the traditional system life cycle to be transformed into a genuine continuum, readily understood and accepted by engineers, end users and regulators.

Note: For GAMP supporters, these vertical models insert a central class-laden spine into the vacant space of the traditional V, thereby connecting system requirements on the left to their associated protocols on the right with acceptance criteria being acquired en route.
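The self-documenting idea can be sketched in a few lines: a single configurable definition of a component, its parameters and their risk classification is the source from which protocol test steps are generated, so each requirement on the left of the V is traced to a test on the right with its acceptance criterion acquired en route. All classes, names, the criticality flag and the requirement identifier are hypothetical; this is not part of ISA-S88 or GAMP.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Parameter:
    name: str
    unit: str
    low: float
    high: float
    critical: bool  # assumed risk flag: only critical parameters get formal protocol tests


@dataclass
class Component:
    tag: str
    requirement: str  # user requirement this component satisfies (left side of the V)
    parameters: list = field(default_factory=list)

    def protocol_steps(self):
        """Generate protocol test steps (right side of the V) from the same definition."""
        steps = []
        for p in self.parameters:
            if p.critical:
                steps.append(
                    f"{self.tag}: verify {p.name} is within {p.low}-{p.high} {p.unit} "
                    f"(traces to: {self.requirement})"
                )
        return steps


tank = Component("T-101", "URS-3: hold product at 37 degC", [
    Parameter("temperature", "degC", 35.0, 39.0, critical=True),
    Parameter("agitator speed", "rpm", 50.0, 150.0, critical=False),
])
for step in tank.protocol_steps():
    print(step)
```

Because the protocol text is generated rather than retyped, a change to a parameter range or its risk classification flows straight through to the acceptance criteria, which is the continuum the S88 analogy is meant to convey.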

Methods

Current State

Notwithstanding the wide range of design and scheduling tools that we consider commonplace, the current state engineering methodology is primarily manual and labour-intensive. Pharmaceutical engineers re-enter the same information into disconnected information systems many times in the course of their design, delivery and validation efforts. This is a self-inflicted source of error and non-compliance, cumulatively accounting for an alarming percentage of our capital expenditures.

Piping and Instrumentation Diagrams (P&IDs) remain the dominant representation of the total system, in spite of their obvious physical bias. Specifications and associated equipment listings are copied and pasted as expedient throughout the life cycle, from risk assessment through project management, commissioning and qualification. Maintenance and requalification are poorly served afterthoughts. Data exchange and analysis across applications are minimal, and change control is a tangled web. Quality Engineering standards do not exist to any real extent, and Validation doesn’t trust us, so they do it all over again. The result is a proliferation of disorderly documentation which, contrary to hearsay, adds no value and is of little interest to the 21st century regulator.

Desired State

The desired state methodology is based on an efficient, agile and flexible execution of the model outlined in the previous section. The following discussion examines each of the model’s three main layers.

Components, Functions, and Parameters

In the desired state, systems must be itemised with unique identifiers assigned to each component or element. The absence of explicit rules for tagging, within and across projects, remains the number one source of trouble in pharmaceutical engineering. Remember the maxim ‘no entities without identity’, and if you are playing the impact assessment game, be sure to include an appropriate qualifier (DI: Direct Impact, C: Critical, etc.).
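A tag register that enforces the ‘no entities without identity’ rule might look like the following sketch. The tag convention (area, equipment type, three-digit number) is an assumption for illustration, as are the qualifier codes II and NI (Indirect Impact and No Impact), added here alongside the DI and C qualifiers mentioned in the text.

```python
import re

# Hypothetical tagging rule: AREA-TYPE-NNN, e.g. "B1-TK-101".
TAG_PATTERN = re.compile(r"^[A-Z0-9]+-[A-Z]{2,4}-\d{3}$")

# DI and C come from the text; II and NI are assumed additional impact categories.
QUALIFIERS = {"DI": "Direct Impact", "II": "Indirect Impact",
              "NI": "No Impact", "C": "Critical"}

def build_register(items):
    """Build a tag register from (tag, description, qualifier) tuples.

    Malformed, duplicate or unqualified tags are rejected outright.
    """
    reg = {}
    for tag, description, qualifier in items:
        if not TAG_PATTERN.match(tag):
            raise ValueError(f"malformed tag: {tag!r}")
        if tag in reg:
            raise ValueError(f"duplicate tag: {tag}")
        if qualifier not in QUALIFIERS:
            raise ValueError(f"{tag}: unknown impact qualifier {qualifier!r}")
        reg[tag] = (description, qualifier)
    return reg

# Hypothetical example entries.
reg = build_register([
    ("B1-TK-101", "Buffer hold tank", "DI"),
    ("B1-PH-101", "pH meter on B1-TK-101", "C"),
])
```

The same rule applied within and across projects is what gives the register its value; the pattern itself matters less than applying it consistently.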

When itemising your systems, do not restrict yourself to physical assets. Instead, follow S88’s example and individuate your functions and parameters as well, but do this in parallel rather than complicating or entangling your physical model. Such segmentation will be beneficial later on, when you are attempting to generate specifications and protocols for different phases of your life cycle.
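Keeping functions and parameters in registers parallel to the physical model, linked only by reference, might be sketched as follows. All identifiers and record types are hypothetical. The design choice illustrated is that a parameter points at a function and a function points at component tags, so none of the three models is nested inside another and each can be reported on its own.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Component:
    tag: str
    description: str

@dataclass(frozen=True)
class Function:
    fid: str
    description: str
    components: tuple  # tags of the components that perform the function

@dataclass(frozen=True)
class Parameter:
    pid: str
    name: str
    function: str      # fid of the function this parameter governs

# Three parallel registers, keyed by their own identifiers (hypothetical data).
components = {c.tag: c for c in [
    Component("B1-TK-101", "Buffer hold tank"),
    Component("B1-TT-101", "Tank temperature transmitter"),
]}
functions = {f.fid: f for f in [
    Function("F-010", "Maintain buffer temperature", ("B1-TK-101", "B1-TT-101")),
]}
parameters = {p.pid: p for p in [
    Parameter("P-010-1", "buffer temperature", "F-010"),
]}

def components_for_parameter(pid):
    """Walk parameter -> function -> components by reference only."""
    function = functions[parameters[pid].function]
    return [components[tag] for tag in function.components]

def dangling_references():
    """List references that point at items missing from another register."""
    missing = [(f.fid, t) for f in functions.values()
               for t in f.components if t not in components]
    missing += [(p.pid, p.function) for p in parameters.values()
                if p.function not in functions]
    return missing
```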

What is described here will be familiar to those engaged in Manufacturing Execution System (MES) implementations, where engineering and manufacturing Bills of Material (eBOMs and mBOMs) are needed at different times for different reasons. The same applies within QbD, where different representations of the systems and components at different stages (e.g., design, installation, operation, performance) are required.
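The eBOM/mBOM analogy can be sketched as a single master record from which stage-specific views are filtered. The attribute names, values and stage assignments below are hypothetical placeholders; the sketch only shows that each view is derived from one source rather than copied and re-keyed.

```python
# Hypothetical master record for one component: each attribute carries its
# value and the life-cycle stages at which it is needed.
MASTER = {
    "tag":            ("B1-TK-101", {"design", "installation", "operation", "performance"}),
    "material":       ("316L SS",   {"design", "installation"}),
    "working_volume": ("500 L",     {"design", "performance"}),
    "serial_number":  ("SN-0000",   {"installation"}),
    "temp_setpoint":  ("5 °C",      {"operation", "performance"}),
}

STAGES = ("design", "installation", "operation", "performance")

def stage_view(record, stage):
    """Return only the attributes relevant to one life-cycle stage."""
    if stage not in STAGES:
        raise ValueError(f"unknown stage: {stage}")
    return {name: value for name, (value, stages) in record.items() if stage in stages}
```

For example, `stage_view(MASTER, "installation")` yields the tag, material and serial number, which is roughly what an installation check would need.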

Classes, Attributes, and Targets

By identifying your manufacturing subject matter, you can quickly establish a QbD class library, which for Section 1 would include keywords such as tank, pH meter and elution rate, and for Section 2, control chart, experiment and so on. With such classes in place, follow FDA’s SRP example and define quality attributes based on experience and input from your Subject Matter Experts (SMEs). Be sure to define units of measurement for your quantitative attributes (°C or °F, psig or barg, litres/min or gals/hr, dd/mm/yy or mm/dd/yy).
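A class library in which units are declared once, with the attribute, might be sketched as follows. The classes, attribute names, units and the `quality_related` flag are illustrative assumptions, not an ISPE or FDA schema. Declaring the unit at class level means every instance inherits it, which removes one common source of °C/°F or psig/barg confusion.

```python
from dataclasses import dataclass, field

@dataclass(frozen=True)
class Attribute:
    name: str
    unit: str                      # declared once, inherited by every instance
    quality_related: bool = False  # hypothetical flag set with SME input

@dataclass
class QbDClass:
    name: str
    attributes: dict = field(default_factory=dict)

    def add(self, attr: Attribute) -> "QbDClass":
        """Add an attribute; duplicate names are rejected."""
        if attr.name in self.attributes:
            raise ValueError(f"{self.name}: attribute '{attr.name}' already defined")
        self.attributes[attr.name] = attr
        return self  # allow chaining

# Hypothetical library entries based on keywords mentioned in the text.
library = {
    "tank": QbDClass("tank")
        .add(Attribute("working_volume", "L"))
        .add(Attribute("design_pressure", "barg"))
        .add(Attribute("jacket_temp", "°C", quality_related=True)),
    "pH meter": QbDClass("pH meter")
        .add(Attribute("range_low", "pH"))
        .add(Attribute("range_high", "pH"))
        .add(Attribute("accuracy", "pH", quality_related=True)),
}
```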

With the S88 and FDA examples as your guides, you are now in a position to classify your systems and components, and all that remains is to assign the relevant target values to the predefined attributes. This is pharmaceutical engineering at its leanest: the target values of your variables are what distinguish one regulated technical element from another, and they will also determine your Design Qualification (DQ) agenda.
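Assigning target values to predefined attributes, and deriving a DQ checklist from them, can be sketched as below. The class definition, function names and checklist wording are hypothetical. The sketch rejects an instance that omits a predefined attribute or introduces an undefined one, so the class remains the single point of control.

```python
# Hypothetical class definitions: attribute name -> unit of measurement.
CLASSES = {
    "tank": {"working_volume": "L", "design_pressure": "barg", "jacket_temp": "°C"},
}

def instantiate(tag, class_name, targets):
    """Create an instance by assigning target values to the class's predefined attributes."""
    attributes = CLASSES[class_name]
    missing = attributes.keys() - targets.keys()
    undefined = targets.keys() - attributes.keys()
    if missing or undefined:
        raise ValueError(f"{tag}: missing {sorted(missing)}, undefined {sorted(undefined)}")
    return {"tag": tag, "class": class_name,
            "targets": {name: (value, attributes[name]) for name, value in targets.items()}}

def dq_checklist(instance):
    """One Design Qualification check per target value, in attribute-name order."""
    return [f"{instance['tag']}: confirm {name} = {value} {unit}"
            for name, (value, unit) in sorted(instance["targets"].items())]

tk101 = instantiate("B1-TK-101", "tank",
                    {"working_volume": 500, "design_pressure": 3.0, "jacket_temp": 5})
```

If a class is found to be deficient, as the note below anticipates, the fix is made once in `CLASSES` and every instance is re-checked against it.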

Note: As a pharmaceutical engineer, your mix of education and experience should mean that you are qualified to make these determinations – and to recognise when a particular class is deficient and needs to be modified or replaced.

Protocols

A protocol is an instruction set, and so is a computer program, whether it has been S88-derived or otherwise. In both cases, classes serve the additional role of self-assembling the requisite documented output. When you instruct your Recipe Management System to initiate a Temperature Control Sequence, it knows which script to invoke, for the simple reason that the sequence is an instance of a class which is unequivocally connected to the script. Furthermore, it executes the sequence routinely, predictably and consistently. The same applies to GEP and GMP protocol generation within a QbD framework.
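The recipe analogy can be sketched with Python’s standard `string.Template`: each class is bound to a set of protocol step templates, and an instance’s target values fill them in. The templates and step wording are hypothetical examples, not a prescribed IQ/OQ format. Note that `substitute` raises `KeyError` if a value is missing, so an incomplete instance cannot silently produce a protocol.

```python
from string import Template

# Hypothetical binding of a class to its protocol step templates, analogous to a
# sequence class being bound to its script in a recipe management system.
PROTOCOL_TEMPLATES = {
    "tank": [
        Template("IQ: verify $tag nameplate working volume is $working_volume L"),
        Template("OQ: hold $tag at $jacket_temp °C and record the deviation"),
    ],
}

def generate_protocol(class_name, instance):
    """Assemble protocol steps by filling the class templates with instance values.

    Template.substitute raises KeyError if any placeholder has no value.
    """
    return [step.substitute(instance) for step in PROTOCOL_TEMPLATES[class_name]]

# Hypothetical instance values.
tk101 = {"tag": "B1-TK-101", "working_volume": 500, "jacket_temp": 5}
protocol = generate_protocol("tank", tk101)
```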

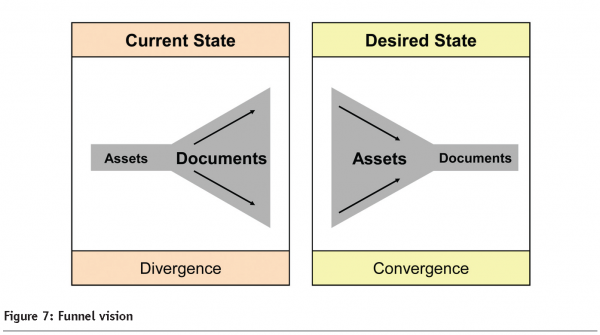

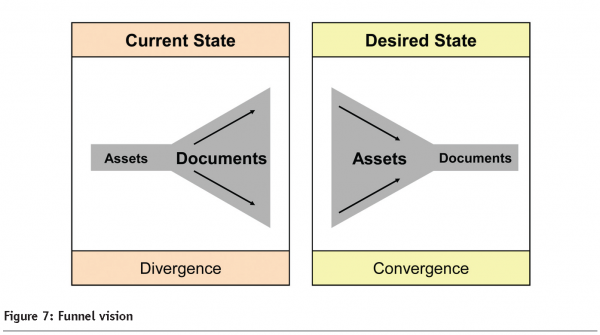

There is a point here worth reiterating: within the desired state methodology, itemisation and classification take primary position, with validation treated as a consequential activity. This is the self-evident engineering referred to in the introduction, and as Figure 7 shows, it delivers convergence rather than divergence.

There is another comparison between S88 and QbD which is important to note. Within S88, standard modules are rigorously qualified across their intended range (by vendors or end-users) before being put into active service. Individual deployments are then subject to verification rather than full-blown validation. There is no reason why this family-based approach cannot be applied to QbD with the same attendant benefits.
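The family-based approach can be expressed as a simple decision: if every deployed set-point lies within the range over which the standard module was qualified, the deployment needs verification only. The module name, parameter and range below are hypothetical, and in practice such a decision would rest on a documented risk assessment rather than a range check alone.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class QualifiedRange:
    low: float
    high: float

# Hypothetical family record: a standard temperature-control module
# qualified once across its intended operating range.
FAMILY_RANGES = {"temp_control_module": {"setpoint_C": QualifiedRange(2.0, 40.0)}}

def qualification_route(family, setpoints):
    """Return ('verification', []) if all set-points lie within the family's
    qualified ranges; otherwise ('full qualification', offending_parameters)."""
    ranges = FAMILY_RANGES[family]
    outside = sorted(
        name for name, value in setpoints.items()
        if name not in ranges or not ranges[name].low <= value <= ranges[name].high
    )
    return ("verification", []) if not outside else ("full qualification", outside)
```

For example, a deployment at 5 °C stays within the family envelope and needs verification only, while one at 60 °C is flagged for full qualification.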

Materials

Current State

At input level, the current state uses material such as GMP regulations, ISPE/ICH guidances, and corporate policies/plans/procedures by the score. These are augmented by technical standards dealing with instrument calibration, piping design, and so on, although these items are rarely life science specific. From an output point of view, documents, drawings and databases are generated in abundance, but with little regard to connectivity, continuity or reusability.

Desired State

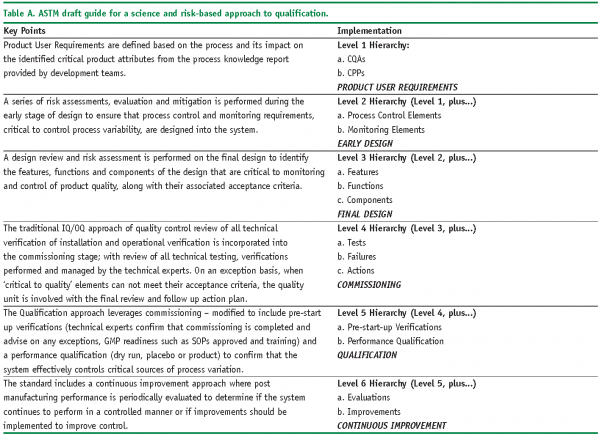

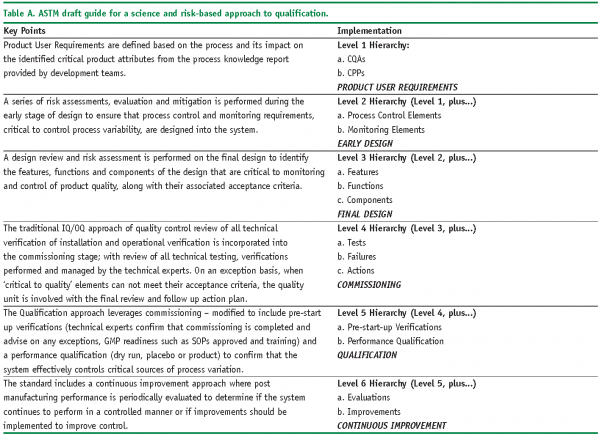

In the desired state, input material (i.e., regulations and user requirements) is translated into the relevant combination of classes, attributes and procedures, and executed using the type of methodology previously outlined. Because emerging guidances get the same treatment, they can be rapidly deployed across the systems they govern. From a QbD point of view, ASTM’s Standard Guide to a Science and Risk-Based Approach to Qualification of Biopharmaceutical and Pharmaceutical Manufacturing System [14] (2006, Draft Under Development) is a topical example, as summarised in Table A. To identify the material deliverables associated with such documents, just read between the lines. This results in essential tasks and milestones being identified with a view to formal execution via your chosen model and method.

As with material inputs, QbD outputs should be informative and frugal. Resist the temptation to have as many formats and styles as there are days in the week, month or year. A single format should be sufficient for system itemisation, another for specification and another for protocols, these documents being direct extensions of each other. Once again, the acid test is efficiency, agility and flexibility.

Note: For collateral evidence such as material certificates and user manuals, consider scanning and cross-referencing rather than putting up with the annoyance of managing and archiving hardcopy – often in triplicate or quadruplicate – for hardcopy’s sake.

Manpower

Current State

Currently, pharmaceutical engineering is organised as a series of independent specialties, each with its own skill-set, vocabulary and methodology. Such specialties include process, mechanical, metrology, automation and so on. Representatives from these departments ostensibly work together to deliver facilities and processes at optimal cost, quality and schedule. The educational process encourages this drive to specialisation, delivering engineers and technologists with specific rather than all-around capabilities.

Desired State

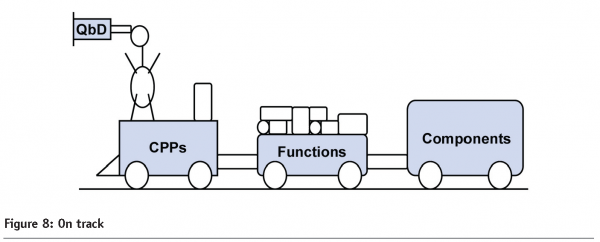

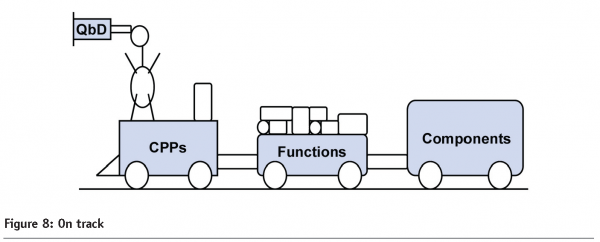

The desired state recognises the need for specialisation, but treats pharmaceutical engineering as a unified and integrated resource. In this scenario, engineers multi-task and have strong IT and analytical skills, ‘singular expertise being no longer sufficient in our workforce’ [15]. Such all-arounders will be leading members of our 21st century project teams, delivering the efficiency, agility and flexibility that both industry and regulator require (see Figure 8).

To make this happen, manpower must facilitate dialogue and data exchange across projects and disciplines. The establishment of a Data Dictionary Group has a major part to play here. Such groups need not be large, their mission being to develop and share standard semantics across applications, and to identify, define and implement opportunities for leveraging documentation and data within the QbD life cycle.
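One way a Data Dictionary Group’s work might surface in software is a shared lookup that maps application-specific field names onto canonical terms. The terms, units and synonyms here are hypothetical. Unknown fields are returned for review rather than guessed, on the assumption that extending the dictionary is the group’s decision, not the individual engineer’s.

```python
# Hypothetical data dictionary: canonical term -> unit and the synonyms used
# by different applications (e.g. a P&ID tool, a CMMS, a validation database).
DATA_DICTIONARY = {
    "design_pressure": {"unit": "barg",
                        "synonyms": {"DES_PRESS", "DesignPressure", "design pressure"}},
    "working_volume": {"unit": "L",
                       "synonyms": {"WORK_VOL", "WorkingVolume", "working volume"}},
}

# Reverse index (case-insensitive), built once so every application
# resolves field names the same way.
_LOOKUP = {syn.lower(): term
           for term, entry in DATA_DICTIONARY.items()
           for syn in entry["synonyms"] | {term}}

def normalise(record):
    """Translate an application-specific record into canonical terms.

    Returns (canonical_record, unknown_field_names).
    """
    canonical, unknown = {}, []
    for field_name, value in record.items():
        term = _LOOKUP.get(field_name.lower())
        if term is None:
            unknown.append(field_name)
        else:
            canonical[term] = value
    return canonical, unknown
```

For example, a record exported as `{"DES_PRESS": 3.0, "WorkingVolume": 500, "Colour": "grey"}` resolves to the two canonical terms, with `"Colour"` set aside for the group to consider.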

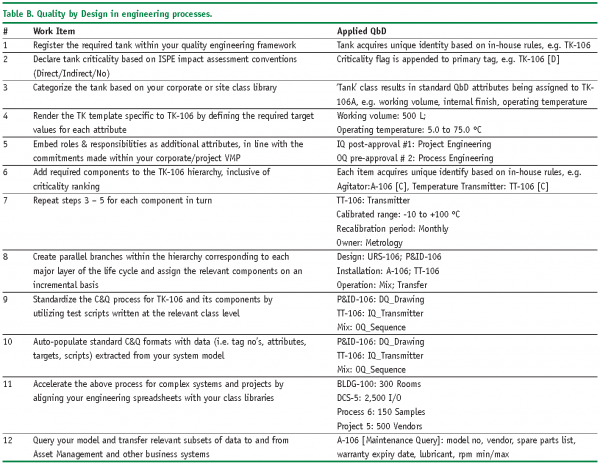

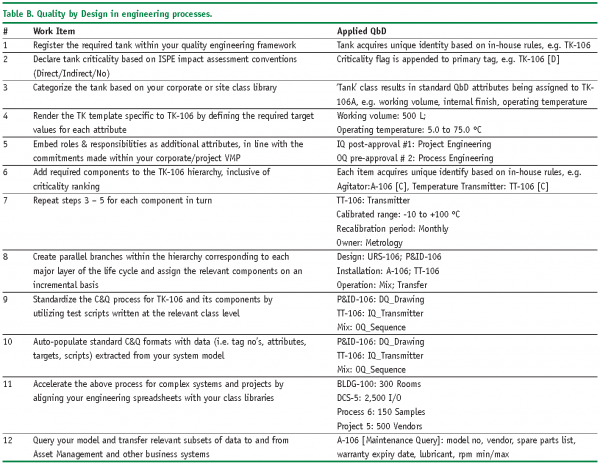

Table B presents the tank example from a quality engineering point of view.

The key point of the example is to emphasise the interconnected, incremental and Right First Time characteristics of applied QbD.

Summary

This section has addressed system design and modelling as prerequisites of Right First Time engineering. In particular, it presents modularity and standardisation, arguing that complex pharmaceutical systems and processes are best specified, explained, and validated by reducing them to their essential elements and properties, based on logic rather than literature. It also discusses connectivity and concurrency, suggesting that critical components, functions and parameters should coexist within a unified QbD framework and that these items should be self-documenting. Finally, it is imperative that 21st century pharmaceutical engineers be more self-assured, regulatory-aware and frugal than their predecessors when conceptualising and delivering facilities, systems, and processes of which they can be justifiably proud.

Conclusion

Dr. Janet Woodcock’s urging that we become ‘maximally efficient, agile and flexible’ has resonated with the three co-authors. Based on their collective experience, the authors propose that QbD can achieve that state, while at the same time isolating and eliminating unwanted pseudo-validation from the workplace. Applying fundamental QbD principles to the design of process, data and equipment systems delivers transparent and self-validating outputs sufficient to the needs of both industry and regulator. In this mode, validation is reinstated as a consequence of good science and good engineering, rather than as an isolated and expensive add-on, as is too often the case currently.

In our view, the keys to success are threefold:

- An integrated validation plan that provides a framework for defining and delivering compliant and robust manufacturing processes

- A statistical methodology to assess and reduce variability while identifying genuinely critical parameters and quality attributes and their associated ranges

- Self-evident engineering and life cycle management techniques that deliver a Right First Time and sustainable asset base

All three must be modular and responsive to the challenges and opportunities that emerging regulations and industry guidelines present. The intent of this article is to stimulate discussion and debate within ISPE, and subject to feedback, to conduct a follow-up workshop and establish a ‘desired state’ interest group.

References

1. http://www.ich.org/cache/compo/276-254-1.html (accessed 3 August 2006).

2. http://ec.europa.eu/enterprise/pharmaceuticals/eudralex/vol-4/pdfs-en/v4an15.pdf (accessed 3 August 2006).

3. www.fda.gov/OHRMS/DOCKETS/ac/05/slides/2005-4187S1_09_Shah.ppt (accessed 3 August 2006).

4. http://www.fda.gov/cber/gdlns/steraseptic.pdf (accessed 3 August 2006).

5. FDA, CDER, CVM, ORA (2004). PAT – ‘A Framework for Innovative Pharmaceutical Development, Manufacturing, and Quality Assurance.’

6. Personal communication with Mr. Jason Orloff.

7. Fry, Edmund M., “The FDA’s Viewpoint,” Drug and Cosmetic Industry, 1985, Vol. 137, No. 1, pp. 46-51.

8. ASQ (2003). ANSI/ASQ Z1.4-2003 Sampling Procedures and Tables for Inspection by Attributes.

9. ASQ (2003). ANSI/ASQ Z1.9-2003 Sampling Procedures and Tables for Inspection by Variables for Percent Nonconforming.

10. Deming, S. and Morgan, S., Experimental Design: A Chemometric Approach, New York, NY: Elsevier, 1987.

11. http://www.ispe.org/gamp (accessed 3 August 2006).

12. http://www.fda.gov/cder/gmp/gmp2004/risk_based.pdf (accessed 3 August 2006).

13. http://www.isa.org/Content/NavigationMenu/Products_and_Services/Standards2/Standards.htm (accessed 3 August 2006).

14. http://www.astm.org/cgi-bin/SoftCart.exe/DATABASE.CART/WORKITEMS/WK9864.htm?E+mystore (accessed 3 August 2006).

15. Jon Tomson, ISPE Past Chairman, ‘FDA and ISPE Agents of Change,’ ISPE Ireland (25 April 2006).

16. http://esd.mit.edu/symposium/pdfs/monograph/architecture-b.pdf (accessed 3 August 2006).

© ISPE 2006. Reprinted with permission from Pharmaceutical Engineering, November/December 2006, Vol. 26, No. 6, www.ISPE.org