High content screening and analysis

Posted: 20 March 2009 | Daniel Zicha, Head of Light Microscopy, Cancer Research UK and Olivier E. Pardo, Team Leader and Lecturer, Cellular Regulatory Networks Laboratory, Imperial College

Technological advances in robotised microscopy, liquid handling and image processing have enabled the emergence of high content screening, in which large numbers of specimens are analysed automatically. Here we give an overview of the process and discuss potential difficulties and solutions.

High content screening (HCS) enables the discovery of novel regulators of biological and physiological processes in an unbiased manner using light microscopy imaging.

It requires:

- A clearly identifiable phenotype with potential for regulation

- A collection of molecules, or library, to challenge this phenotype

- Access to automation both at the experimental and image acquisition levels

- The technical means to perform subsequent high content analysis (HCA)

What is a suitable phenotype for HCS?

Progress in imaging technology enables HCS to be performed not only using cell lines1,2 but also multicellular organisms (e.g. C. elegans)3. Consequently, the variety of phenotypes that can be studied is immense: speed of cell migration, directionality and invasiveness, cytoskeletal organisation, cell division, but also tissue organisation or differentiation, to name but a few. However, the phenotype must possess certain qualities to be suitable for screening. It must either be an all-or-nothing trait (e.g. sexual differentiation in worms) or be quantifiable (e.g. cell speed in μm/s, cell length/width ratio, number of centrosomes). In the latter case, it is desirable that the phenotype has a sufficient range of modulation for significant changes to be observed. The phenotype must also be consistent under identical conditions. Therefore, careful consideration must be given to the choice of experimental model and, where possible, positive and negative controls should be identified and used as references.
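One way to check that a quantifiable phenotype has enough range of modulation relative to its variability is to compare the positive and negative controls directly. The sketch below uses the Z'-factor, a statistic widely used in screening but not mentioned in this article, so it should be read as an illustration rather than the authors' method. The function name and the control values are hypothetical.

```python
from statistics import mean, stdev

def z_prime(positive, negative):
    """Z'-factor: 1 - 3 * (sd_pos + sd_neg) / |mean_pos - mean_neg|.

    Values close to 1 indicate well separated, consistent controls;
    values at or below 0 indicate that the control distributions overlap.
    """
    separation = abs(mean(positive) - mean(negative))
    if separation == 0:
        raise ValueError("controls have identical means; the phenotype cannot be resolved")
    return 1.0 - 3.0 * (stdev(positive) + stdev(negative)) / separation

# Hypothetical per-well mean cell speeds (arbitrary units) for control wells.
negative_control = [0.50, 0.55, 0.45, 0.52, 0.48]
positive_control = [1.50, 1.40, 1.60, 1.55, 1.45]

print(f"Z' = {z_prime(positive_control, negative_control):.2f}")
```

A low value computed from pilot plates would suggest revisiting the experimental model or the choice of controls before committing to a full screen.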

Libraries

Three types of libraries are commonly used to perform HCS: chemical compounds, DNA-based and RNA-based libraries.

Pharmacological compound libraries

Some collections contain well known molecules with a documented history of pharmacological and clinical activity, while others are composed of previously untested synthesised or extracted molecules. HCS using well-established compounds enables exploration of novel applications for these molecules or identification of factors that can modulate their effects, whether these relate to the genetic background of the experimental system or are additional chemicals4. On the other hand, libraries containing previously untested molecules will help discover compounds with previously undocumented biological activities5 or with more efficient pharmacological properties than their established counterparts6. The latter approach is in general use in the pharmaceutical industry, where chemical substitution of lead compounds generates a high number of secondary molecules that are then tested and substituted in an iterative process7. Chemical compound library screens present a number of advantages. They are highly amenable to automation, and delivery into the cells does not require prior chemical complexing as it does for RNA or DNA molecules. Also, drug-based screens directly identify pharmacologically active compounds rather than therapeutic targets, leading to faster practical translation of the results. However, subsequent analysis of data obtained using these collections can be complicated by several factors. First, pharmacological compounds generally possess more than one target and it is often unclear which of these is responsible for the observed effects. Moreover, active concentrations vary widely across a drug panel and between experimental systems. Compounds are therefore often tested over a dose range, which considerably increases the size of the data set. HCS with small-compound libraries has been used to identify molecules targeting kinase activity8,9 and to study post-translational10 or cellular5 processes, to name but a few applications.
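The effect of dose-range testing on data-set size can be estimated with simple arithmetic. The sketch below is illustrative only: the library size, number of doses, replicates, plate format, fields per well and channels are hypothetical values, and control wells are ignored.

```python
import math

def screen_size(n_compounds, n_doses, n_replicates,
                wells_per_plate, fields_per_well, channels):
    """Return (wells, plates, images) for a compound screen.

    Each compound is tested at every dose in every replicate, and each
    well is imaged in several fields and fluorescence channels.
    """
    wells = n_compounds * n_doses * n_replicates
    plates = math.ceil(wells / wells_per_plate)
    images = wells * fields_per_well * channels
    return wells, plates, images

# Hypothetical 1000-compound library in 384-well plates, in triplicate.
single_dose = screen_size(1000, 1, 3, 384, 4, 2)
dose_range = screen_size(1000, 8, 3, 384, 4, 2)

print("single dose:", single_dose)   # (3000, 8, 24000)
print("8-point dose range:", dose_range)   # (24000, 63, 192000)
```

Under these assumptions, moving from one concentration to an eight-point dose range multiplies the number of wells and images by eight, which has direct consequences for acquisition time, storage and analysis.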

RNA-based libraries

RNA silencing technology, with the commercialisation of genome-wide siRNA (short interfering RNA) libraries, has enabled the investigation of biological processes in ways that were previously impossible. It has led to the identification of proteins involved in a plethora of biological processes, some of which were amenable to end-point or live cell imaging. Changes in cell morphology11,12, the actin and tubulin cytoskeletons13,14 and cell motility15 upon RNA interference have been investigated using high content imaging of cell lines expressing fluorescently tagged proteins or labelled with fluorescent stains. siRNA libraries have several advantages. Each molecule tested will theoretically silence only a single protein, allowing direct correlation between a target and the observed phenotype. Also, the same concentration of siRNA can be used across cell types and regardless of the target. However, complexing with a chemical carrier or physical means (e.g. electroporation) is usually required to introduce siRNAs into most cell systems. These techniques can to some extent complicate automation and perturb the phenotype under observation. Moreover, the time required for target downregulation (usually 48 to 72 hours) can interfere with the phenotype studied, either because of compensatory mechanisms (e.g. functional redundancy) or side effects (e.g. cell death). Furthermore, the effect of siRNAs is limited in time, with effective target downregulation typically restricted to a maximum of five days. The same limitations apply to miRNA (microRNA) libraries. However, miRNAs, like pharmacological compounds, silence more than one target at a time, complicating later data analysis. siRNA libraries can be purchased from various companies including Dharmacon and Qiagen. A number of pre-defined collections exist, targeting protein groups of various sizes up to the full human or mouse genome. Additionally, custom libraries can be purchased targeting any desired list of proteins.
All companies use proprietary algorithms to design silencing sequences that minimise the risk of off-target effects. However, there is no indication that a particular algorithm is superior and the choice of provider should rather be directed by the ability of the company to accommodate the customer’s desired library layout.

DNA-based libraries

Two types of vector DNA collections have been used for HCS: libraries coding for shRNAs (short hairpin RNAs) and those for protein expression2. shRNAs, like siRNAs, can target single proteins within a cell and will do so at the same vector concentration for all targets. However, shRNAs provide the added advantage of mediating long-term (longer than five days) target downregulation, and the vectors often carry selection markers or reporter genes to enrich cell populations and limit analysis to efficiently silenced cells. Retroviral or lentiviral shRNA libraries offer the choice of either transfecting or infecting the target cells. Finally, these libraries are economically advantageous as, unlike siRNA collections, they can be replenished following bacterial transformation. However, they also have disadvantages. First, the efficacy of target downregulation is generally inferior to that obtained with siRNA owing to the reduced number of targeting sequences per cell. Also, when viruses are generated, the nature of the shRNA encoded can affect viral packaging, leading to variations in virus titre across the collection. The Netherlands Cancer Institute (NKI) and the Hannon laboratory offer the two most used shRNA libraries. Later versions of these collections have been redesigned to minimise off-target effects.

Libraries for protein expression have been used to assess the effect of overexpressing wild-type or mutant (gain or loss of function) proteins on various biological processes. These libraries often complement shRNA or siRNA collections in that they allow one to perform the converse experiment to protein silencing. The expression of fluorescent fusion proteins further enables co-localisation of the molecules of interest with the compartment under investigation. As for shRNAs, these vectors require either complexing with transfection reagents or packaging of viral particles. However, unlike shRNA vectors, these have the added disadvantage of varied transgene expression efficiency depending on the size and/or the cellular stability of the encoded protein. Also, transgenes are generally expressed at supra-physiological concentrations that can result in artefactual effects. Origene offers expression libraries covering parts of the human genome. The NIH has produced a library of 1000 genes overexpressed in breast cancer, the BC-1000. No library covering the whole human genome exists at this time. However, cell line-specific cDNA libraries cloned in retroviral vectors exist16 and the vectors used enable the potential preparation of cDNA expression libraries from any cell line of interest.

Automated cell culture and robotisation

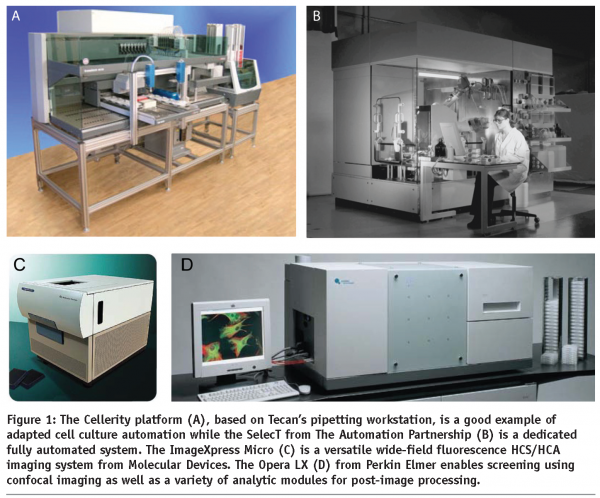

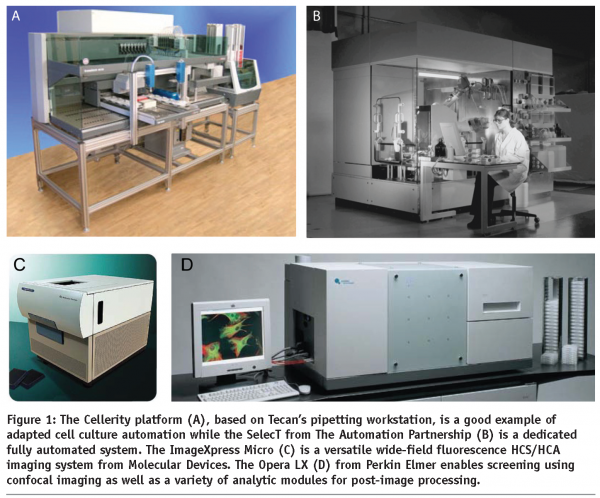

The cell culture needs of HCS can present a daunting task if manual intervention is the sole option. However, because of its repetitive nature, cell culture is an ideal candidate for automation. Indeed, robots can be programmed to perform all tasks required for cell propagation and maintenance such as flask labelling, cell delivery, trypsinisation, scraping and pipetting of required factors. Cell confluence can also be automatically assessed (e.g. using IncuCyte from Essen Instruments) to time these precisely across multiple cell lines. There is only a limited number of platforms for automated cell culture and they can be split into two categories: add-on systems for robotic platforms and dedicated cell culture robots (see Figure 1a and 1b). Although dedicated systems offer the highest reliability and ease of use, an adapted robotic platform is often a cheaper and more versatile option.

Several companies offer cell culture automation add-ons for existing robotic systems. For instance, Hamilton’s Cellhost system adapts onto the Microlab STAR pipetting workstation. A robotic hand handles cell culture plates in and out of fully integrated incubators and transfers them onto the pipetting station. Other systems include Cellerity, an automated cell culture solution for Tecan’s robotic liquid handling platform (see Figure 1a) while Beckman Coulter similarly adapted their Biomek FX workstation. A more open-plan system revolving around an industrial robotic arm is proposed by Cytogration. This option provides further plasticity as additional devices (other than those required for cell culture) can be linked to the system, provided they can be accessed by the robotic arm. Although these systems offer more flexibility, integration of the various components into a working environment is not necessarily trivial and programming might be required in addition to the operating software.

Unlike the above platforms, dedicated cell culture systems are enclosed environments. While they lack the versatility of open configurations, they are capable of dealing with larger cell culture loads. Also, their integrated software makes using these units more intuitive. However they are often bulkier and more costly. The MACCS Automated Cell Culture System from Matrical Biosciences can store up to 3200 flasks at any one time and accommodate simultaneously several cell culture formats, from microtiter plates to shaker flasks. The Thermo Scientific CGD WorkCell is another example of such stations while The Automation Partnership (TAP) proposes the widest range of systems, from open plan to tightly sealed units (see Figure 1b).

Several of the above robots can be used to automate cell transfection. Indeed most libraries are available in 96 or 384-well format and oligonucleotide collections can now be purchased pre-complexed with appropriate transfection reagents and only require cell addition17. Also, for end-point HCS, cell fixation and staining procedures can be performed automatically. Plug-in systems, rather than dedicated workstations, are generally more adapted to these multiple tasks and offer more user-based control.

Automation of acquisition

A number of automated HCS acquisition platforms are available for both low light level wide-field and confocal microscopy. These are enclosed systems offering multi-wavelength acquisition from multi-well plates, laser auto-focusing and time-lapse imaging. Molecular Devices offers the ImageXpress Micro (see Figure 1c) and ImageXpress Ultra for high content wide-field and confocal imaging, respectively. Integrated environment control allows for time-lapse imaging while a robotic plate feeder enables continuous acquisition of end-point assays. The software driving both these systems, MetaXpress, offers a large choice of pre-loaded experimental layouts and analysis modules. Notably, this software enables custom design of additional layouts and macro programming, a characteristic lacking in most of its competitors. However, its image database solution is inconvenient for multi-user environments. The Cellomics ArrayScan VTI HCS Reader from Thermo Fisher is another wide-field high content imager that can be adapted with environment control. The attraction of this system is its relative ease of use at the software level. However, the software does not enable macro programming and customisation of the pre-loaded layouts is limited. Moreover, the ImageXpress Micro can acquire image stacks, a function not available on the Cellomics instrument. The Opera LX imager (see Figure 1d) from Perkin Elmer is, at present, the most widely used confocal screening system. It benefits from open architecture software that allows user-created layouts while providing a library of predefined cellular assays. Becton Dickinson’s Pathway High-Content Bioimagers can perform both wide-field and confocal imaging and can be adapted for robotic plate feeding. Although the above systems are better suited to HCS, medium throughput acquisition can be performed using conventional low light level wide-field or confocal microscopes.
For low light level wide-field microscopy, both the Kinetic Imaging and Metamorph software packages allow x-y positions to be saved for acquisition of subsequent plates. Zeiss confocal microscopy software enables flexible macro programming and ready stack acquisition in multi-position mode using its Multi-Time Series module. Leica’s confocal solution allows HCS acquisition with its additional Matrix module. Although using these systems instead of dedicated platforms is more economically viable for laboratories that do not intend to engage in a long-term screening programme and already have conventional imaging systems, the layout of these units (e.g. condenser, environment control chambers) does not provide convenient access for robotic plate loading. Also, these units will typically have slower acquisition speeds. In this regard, it is worth mentioning that, where confocal imaging is required, spinning disc systems are recommended. Indeed, while multiple pinholes will increase fluorescent bleed-through in thick specimens, these models will nevertheless considerably shorten acquisition time (by a factor of eight to ten compared with conventional confocal units).

Image processing and data analysis

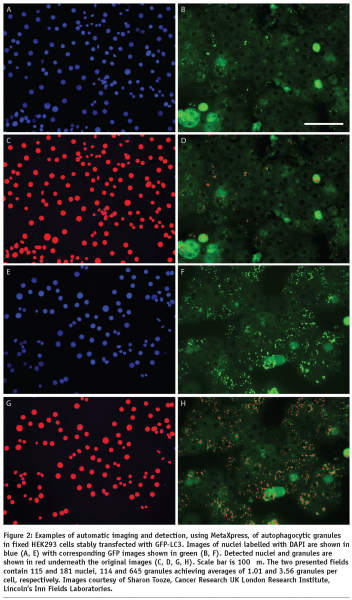

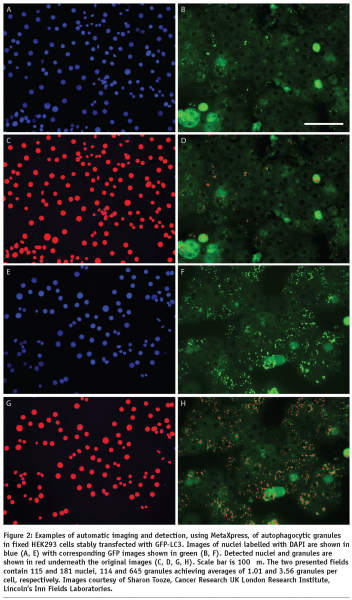

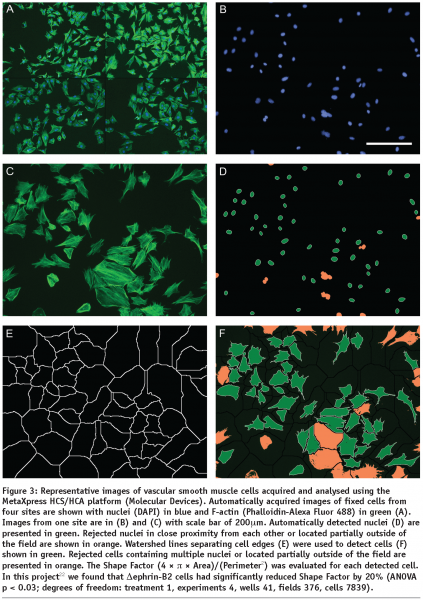

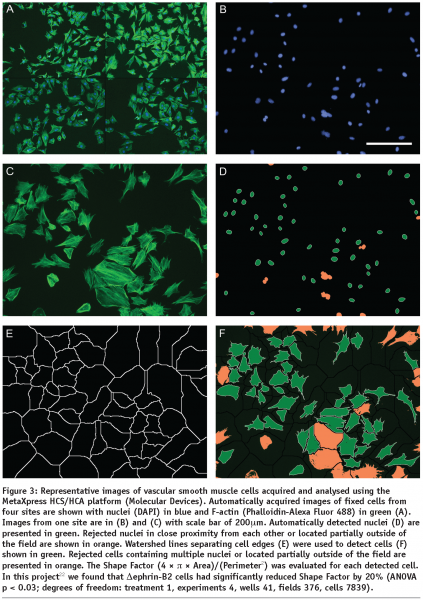

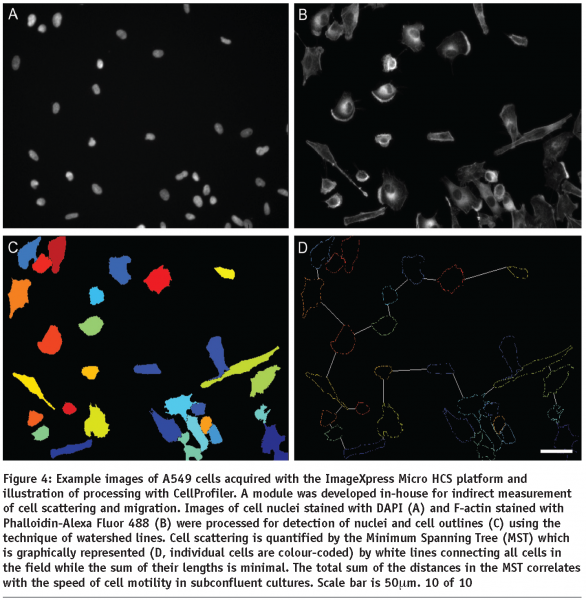

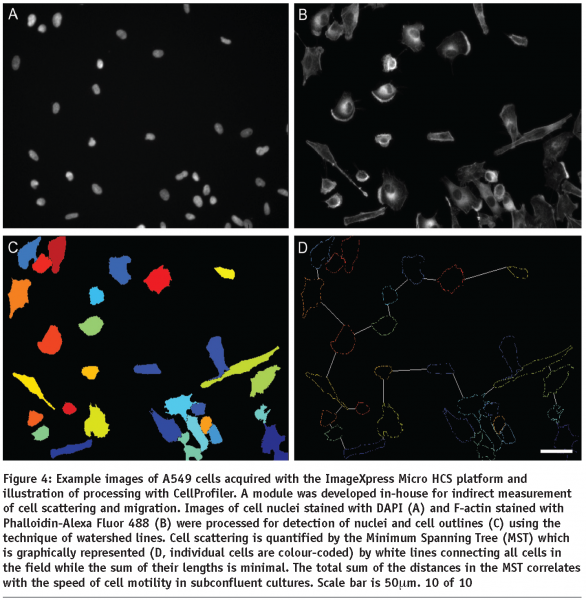

HCA relies on automatic image processing since interactive analysis cannot effectively deal with large sets of images and lacks objectivity. A large number of HCS assays, whether they are performed using fixed or live cell imaging, will incorporate cell nuclear labelling with a DNA dye or expression of a fluorescently labelled protein (e.g. GFP-histone). This is why HCA usually starts with detection of cell nuclei, which is a relatively easy task for automatic image processing since the nuclei are generally oval, similar in size and not touching. Even touching nuclei in freshly divided cells can be separated based on their shape. The next task for cell-based assays is detection of cell outlines, which is commonly addressed using watershed-based algorithms. The identified cells are then characterised by morphometric parameters which reflect the cell outlines and the distributions of labelled molecular species within the cell body in terms of localisation and/or texture. Examples of morphometric parameters are the spread area of cells, number of granules (see Figure 2) or Shape Factor (see Figure 3). Scattering of sparse cells in observation fields may be used to produce indirect measurements of cell migration18 (see Figure 4). In dynamic studies, individual cells need to be identified at different time points using tracking algorithms in order to produce parameters directly measuring their motility. Simple automatic tracking is based on the nearest cell position in the next frame and can produce satisfactory results. Additional morphometric parameters may be utilised to improve the reliability of cell identification. These approaches are only suitable for high contrast images with well defined cells. More complex images may benefit from methods based on image cross-correlation. Available software for HCA largely focuses on processing of 2D images. 3D sets of images can be converted to 2D, e.g. by maximum projection or extended focus processing.
Most commercially available image processing software packages with full 3D capability, such as Imaris (Bitplane) or Volocity (Improvision) have limited applicability to HCA. Dedicated HCA software can be purchased with or without the acquisition hardware e.g. from Molecular Devices, PerkinElmer or Thermo Fisher. Open-source solutions CellProfiler and CellProfiler Analyst (www.cellprofiler.org) are available from the Broad Institute19,20. The image processing is obviously computationally demanding and therefore it is beneficial to use computer clusters to speed up the process. The speed of analysis will approximately be a linear function of the number of processors within the cluster. Incidentally, the CellProfiler software comes ready for use with computer clusters.
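The simple nearest-position tracking described above can be sketched in a few lines. This is a minimal illustration, not the algorithm of any particular HCA package: it assumes that cell centroids have already been extracted for each frame, and the greedy shortest-distance-first assignment, the `max_distance` gate and all function names are choices made for this example.

```python
import math

def link_nearest(prev_positions, next_positions, max_distance):
    """Link cells in one frame to the nearest centroids in the next frame.

    Candidate pairs are accepted greedily, shortest distance first, so each
    centroid is used at most once. Returns {previous_index: next_index};
    cells with no partner within max_distance stay unlinked.
    """
    candidates = []
    for i, p in enumerate(prev_positions):
        for j, q in enumerate(next_positions):
            d = math.dist(p, q)
            if d <= max_distance:
                candidates.append((d, i, j))
    candidates.sort()
    links, used_next = {}, set()
    for _, i, j in candidates:
        if i not in links and j not in used_next:
            links[i] = j
            used_next.add(j)
    return links

def mean_speeds(frames, interval, max_distance):
    """Mean speed of every cell that could be followed through all frames."""
    current = {track: track for track in range(len(frames[0]))}
    path_length = {track: 0.0 for track in current}
    for prev, nxt in zip(frames, frames[1:]):
        links = link_nearest(prev, nxt, max_distance)
        still_tracked = {}
        for track, idx in current.items():
            if idx in links:
                path_length[track] += math.dist(prev[idx], nxt[links[idx]])
                still_tracked[track] = links[idx]
        current = still_tracked  # tracks that lost their cell are dropped
    steps = len(frames) - 1
    return {track: path_length[track] / (steps * interval) for track in current}

# Hypothetical centroids (in micrometres) for two cells over three frames;
# the detection order differs between frames, as it often does in practice.
frames = [
    [(10, 10), (50, 50)],
    [(52, 51), (12, 10)],
    [(14, 11), (55, 52)],
]
print(mean_speeds(frames, interval=1.0, max_distance=10))
```

As the text notes, this kind of linking is only reliable when cells are well separated and clearly detected; adding morphometric parameters to the distance measure is one way to make the matching more robust.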

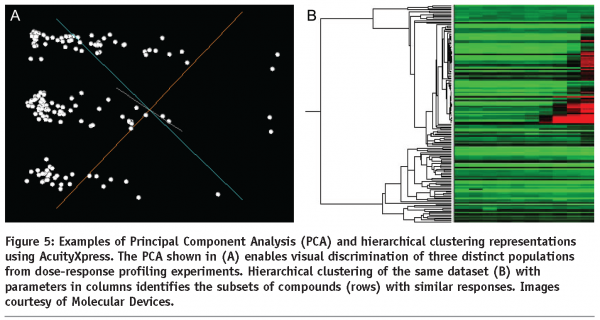

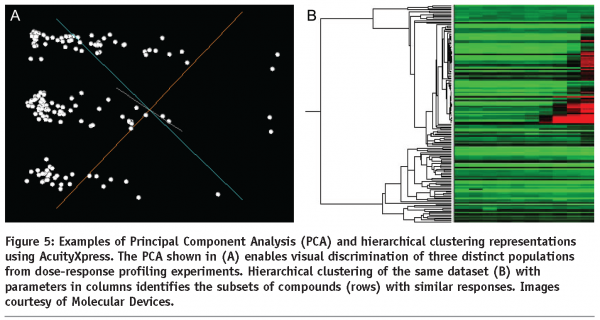

Morphometric parameters of individual cells are utilised in subsequent data analysis. Comprehensive overviews can initially be generated using scatter plots and heat maps. This is particularly useful for detecting potential problems such as edge effects in multi-well plates, which may be caused by difficulties with liquid handling or variations in CO2 diffusion under the lid. The ultimate aim of HCA is the identification of a subset of experimental treatments (e.g. chemical compounds or siRNAs) with particular properties (e.g. inducing a large spread area of cells or a high number of granules per cell). This is generally a classification problem. When just one quantitative parameter is taken into account, the classification can be based on a threshold separating treatments achieving higher or lower values. With multiple parameters, the task is more complex and can be addressed automatically using, for example, Principal Component Analysis (see Figure 5) or machine learning with user-provided examples characterising the desired classes of treatments. In all these cases, it is essential that parameters containing relevant information are chosen for the processing. The decision that a treatment belongs to a certain class is best based on a valid statistical test (for example, ANOVA with Benjamini-Hochberg correction for false discovery rate with multiple tests21), and this is why a reasonable number of replicates should be used. More than three replicates are usually impractical for extensive screens. With low numbers of replicates, robust statistical tests may be too stringent. In this instance, a more relaxed approach can be adopted in the analysis of the primary screen, providing a larger subset for a subsequent secondary screen. The latter can then be performed with an increased number of replicates, allowing a more robust statistical analysis.
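The Benjamini-Hochberg correction mentioned above can be illustrated with a short sketch. It covers only the correction step: the per-treatment p-values are assumed to come from a test run beforehand (ANOVA in the text), and the values and function name used here are hypothetical.

```python
def benjamini_hochberg(p_values, fdr=0.05):
    """Benjamini-Hochberg step-up procedure.

    Returns a list of booleans, in the input order, that is True for every
    treatment declared a hit while controlling the false discovery rate.
    """
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    # Find the largest rank k whose p-value satisfies p_(k) <= (k / m) * fdr.
    cutoff_rank = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= rank / m * fdr:
            cutoff_rank = rank
    # Every treatment ranked at or below the cutoff is a hit.
    hits = [False] * m
    for rank, idx in enumerate(order, start=1):
        if rank <= cutoff_rank:
            hits[idx] = True
    return hits

# Hypothetical p-values for five treatments compared with the negative control.
p_values = [0.004, 0.028, 0.019, 0.6, 0.035]
print(benjamini_hochberg(p_values, fdr=0.05))
```

With these example values, four of the five treatments are retained, whereas requiring each p-value to fall below 0.05/5 = 0.01 would keep only the first. Raising `fdr` for a primary screen is one way to implement the more relaxed approach described above before a secondary screen with more replicates.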

Conclusion

HCS and HCA technology has already proved useful and will become more important in future. It will extend the current approaches to novel experimental treatments and biological systems. More importantly, however, HCS will be instrumental in addressing the immense complexity of analysing multiple simultaneous experimental treatments. Such approaches will help unravel the relevant biological mechanisms (cause-effect consequences of treatments with chemical compounds or modulation of protein expression levels) in the context of variable genetic backgrounds (circumstantial patterns of protein expression), and pave the way for individualised therapy.

Acknowledgments

We would like to thank Catherine Cowell, Cancer Research UK London Research Institute, Lincoln’s Inn Fields Laboratories, for constructive comments on the article.

References

- Neumann, B., Held, M., Liebel, U., Erfle, H., Rogers, P., Pepperkok, R., and Ellenberg, J. (2006). High-throughput RNAi screening by time-lapse imaging of live human cells. Nature methods 3, 385-90.

- Naffar-Abu-Amara, S., Shay, T., Galun, M., Cohen, N., Isakoff, S. J., Kam, Z., and Geiger, B. (2008). Identification of novel pro-migratory, cancer-associated genes using quantitative, microscopy-based screening. PLoS ONE 3, e1457.

- Crane, M. M., Chung, K., and Lu, H. (2009). Computer-enhanced high-throughput genetic screens of C. elegans in a microfluidic system. Lab on a chip 9, 38-40.

- Yip, K. W., Mao, X., Au, P. Y., Hedley, D. W., Chow, S., Dalili, S., Mocanu, J. D., Bastianutto, C., Schimmer, A., and Liu, F. F. (2006). Benzethonium chloride: a novel anticancer agent identified by using a cell-based small-molecule screen. Clin Cancer Res 12, 5557-69.

- Rebacz, B., Larsen, T. O., Clausen, M. H., Ronnest, M. H., Loffler, H., Ho, A. D., and Kramer, A. (2007). Identification of griseofulvin as an inhibitor of centrosomal clustering in a phenotype-based screen. Cancer research 67, 6342-50.

- Denner, P., Schmalowsky, J., and Prechtl, S. (2008). High-content analysis in preclinical drug discovery. Combinatorial chemistry & high throughput screening 11, 216-30.

- Minguez, J. M., Giuliano, K. A., Balachandran, R., Madiraju, C., Curran, D. P., and Day, B. W. (2002). Synthesis and high content cell-based profiling of simplified analogues of the microtubule stabilizer (+)-discodermolide. Molecular cancer therapeutics 1, 1305-13.

- Gasparri, F., Sola, F., Bandiera, T., Moll, J., and Galvani, A. (2008). High-content analysis of kinase activity in cells. Combinatorial chemistry & high throughput screening 11, 523-36.

- Heydorn, A., Lundholt, B. K., Praestegaard, M., and Pagliaro, L. (2006). Protein translocation assays: key tools for accessing new biological information with high-throughput microscopy. Methods in enzymology 414, 513-30.

- Simonen, M., Ibig-Rehm, Y., Hofmann, G., Zimmermann, J., Albrecht, G., Magnier, M., Heidinger, V., and Gabriel, D. (2008). High-content assay to study protein prenylation. J Biomol Screen 13, 456-67.

- Bakal, C., Aach, J., Church, G., and Perrimon, N. (2007). Quantitative morphological signatures define local signaling networks regulating cell morphology. Science 316, 1753-6.

- Jones, T. R., Carpenter, A. E., Lamprecht, M. R., Moffat, J., Silver, S. J., Grenier, J. K., Castoreno, A. B., Eggert, U. S., Root, D. E., Golland, P., and Sabatini, D. M. (2009). Scoring diverse cellular morphologies in image-based screens with iterative feedback and machine learning. Proceedings of the National Academy of Sciences of the United States of America 106, 1826-31.

- Kiger, A. A., Baum, B., Jones, S., Jones, M. R., Coulson, A., Echeverri, C., and Perrimon, N. (2003). A functional genomic analysis of cell morphology using RNA interference. Journal of biology 2, 27.

- Wang, J., Zhou, X., Bradley, P. L., Chang, S. F., Perrimon, N., and Wong, S. T. (2008). Cellular phenotype recognition for high-content RNA interference genome-wide screening. J Biomol Screen 13, 29-39.

- Simpson, K. J., Selfors, L. M., Bui, J., Reynolds, A., Leake, D., Khvorova, A., and Brugge, J. S. (2008). Identification of genes that regulate epithelial cell migration using an siRNA screening approach. Nature cell biology 10, 1027-38.

- Koh, E. Y., Chen, T., and Daley, G. Q. (2002). Novel retroviral vectors to facilitate expression screens in mammalian cells. Nucleic acids research 30, e142.

- Erfle, H., Neumann, B., Rogers, P., Bulkescher, J., Ellenberg, J., and Pepperkok, R. (2008). Work flow for multiplexing siRNA assays by solid-phase reverse transfection in multiwell plates. J Biomol Screen 13, 575-80.

- Zicha, D., Pokorna, E., Urbanec, P., Chaloupkova, A., and Vesely, P. (1993). Acid pH induced persistent motility in vitro of metastasising sarcoma cells. Journal of Computer Assisted Microscopy 5, 273-9.

- Carpenter, A. E., Jones, T. R., Lamprecht, M. R., Clarke, C., Kang, I. H., Friman, O., Guertin, D. A., Chang, J. H., Lindquist, R. A., Moffat, J., Golland, P., and Sabatini, D. M. (2006). CellProfiler: image analysis software for identifying and quantifying cell phenotypes. Genome Biol 7, R100.

- Jones, T. R., Kang, I. H., Wheeler, D. B., Lindquist, R. A., Papallo, A., Sabatini, D. M., Golland, P., and Carpenter, A. E. (2008). CellProfiler Analyst: data exploration and analysis software for complex image-based screens. BMC Bioinformatics 9, 482.

- Benjamini, Y., Drai, D., Elmer, G., Kafkafi, N., and Golani, I. (2001). Controlling the false discovery rate in behavior genetics research. Behav Brain Res 125, 279-84.

- Foo, S. S., Turner, C. J., Adams, S., Compagni, A., Aubyn, D., Kogata, N., Lindblom, P., Shani, M., Zicha, D., and Adams, R. H. (2006). Ephrin-B2 controls cell motility and adhesion during blood-vessel-wall assembly. Cell 124, 161-73.