Under the microscope: Mitigating risks in manufacturing processes

Posted: 18 February 2020 | Andrew Anderson (ACD/Labs)

In light of recent media coverage about product recalls – particularly those resulting from the detection of N-nitrosodimethylamine (NDMA) – this article outlines the wide-ranging negative impacts of such recalls on patients, pharmacies, manufacturers, pharmaceutical companies and health authorities, and offers ways to mitigate the risk of future recalls.

In today’s global pharmaceutical supply chains, what are some of the risks of impurity-based recalls?

While there is a variety of risk factors, some recently disclosed product recalls highlight a number of factors that are especially important for stakeholders to consider.

Highly distributed supply chains certainly reduce the cost of product manufacturing and distribution. Additionally, utilising external contract manufacturers – especially those whose core capabilities include unique synthesis and material capabilities – enables more effective utilisation of innovative manufacturing assets.

However, there are three risk points that must be effectively managed by stakeholders. First, effective capture and review of batch record summary data is critical to assuring product quality. Consider the impact of operating conditions and the need for assurance that those conditions conform to market-authorised procedures. Solvent switching by a contract manufacturing organisation (CMO), for example, is a problem we have seen in the media over the last few years.[1] What may seem an innocuous non-conforming process change very early in the manufacturing process (ie, use of an alternate reaction solvent – dimethylformamide [DMF]) can lead to devastating consequences, as has been witnessed since late 2018. A seemingly low-probability risk can have a significant impact on drug product quality, particularly in terms of the drug product’s impurity profile. There are many cases where specific impurities observed in raw materials or early intermediates are purged through downstream purification. However, if the process-dependent purge factors for impurities are not quantified, stakeholders are exposed to the risk of final drug product contamination, as seen with the sartan recall.
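To make the idea of quantifying purge concrete, here is a minimal sketch rather than a validated method. It assumes a simple multiplicative model in which each downstream step reduces the impurity level by a fixed factor; the step names, factors, starting level and limit are hypothetical illustration values, not data from any recall.

```python
from math import prod

def cumulative_purge_factor(step_factors):
    """Multiply per-step purge factors (each = level before / level after)."""
    return prod(step_factors.values())

def predicted_residual_ppm(initial_ppm, step_factors):
    """Impurity level expected in the final material under the simple model."""
    return initial_ppm / cumulative_purge_factor(step_factors)

# Hypothetical values for illustration only.
steps = {"aqueous wash": 10.0, "crystallisation": 20.0, "drying": 1.0}
initial_ppm = 500.0
limit_ppm = 1.0

residual = predicted_residual_ppm(initial_ppm, steps)
needs_control = residual > limit_ppm  # True means purge alone is not enough

print(f"cumulative purge factor: {cumulative_purge_factor(steps):.0f}")
print(f"predicted residual: {residual:.2f} ppm; exceeds limit: {needs_control}")
```

With these assumed numbers the predicted residual stays above the limit, which is the situation in which an unquantified purge would leave a stakeholder exposed.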

How does the current paradigm contribute to risk?

Particularly for raw material procurement and early key intermediate contract manufacturing, sponsor pharmaceutical companies often rely on their external ecosystem to maintain appropriate quality procedures. While regulatory bodies worldwide mandate submission of quality summaries under a drug master file and conduct extensive site/procedure audits, there are times when the sponsor firms may not review (or have access to) all pertinent data necessary to identify a risk. What they do receive is usually an abstraction: chemical and analytical data collected as “proof” is reduced to textual and numerical information for ease of distribution.

Additionally, process execution information and quality assurance test results are exported into human-readable (but very lengthy) summaries. Human quality assurance staff within both the sponsor firms and health authorities must review these summary documents. The lack of a digital system to allow for automated comparison of batch records and test results to market-authorised procedure parameters and product specifications presents significant quality risks.
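The kind of automated comparison described here could look something like the sketch below. It is an illustration under stated assumptions, not a description of any existing system: the parameter names, authorised ranges and the recorded batch values are all hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class NumericRange:
    low: float
    high: float

    def allows(self, value):
        return isinstance(value, (int, float)) and self.low <= value <= self.high

@dataclass(frozen=True)
class AllowedValues:
    values: frozenset

    def allows(self, value):
        return value in self.values

def find_deviations(authorised, batch_record):
    """Return (parameter, recorded value) pairs that break the authorised procedure."""
    deviations = []
    for name, rule in authorised.items():
        recorded = batch_record.get(name)
        # A parameter missing from the batch record is treated as a deviation too.
        if recorded is None or not rule.allows(recorded):
            deviations.append((name, recorded))
    return deviations

# Hypothetical authorised procedure and executed batch record.
authorised = {
    "reaction_solvent": AllowedValues(frozenset({"toluene"})),
    "reaction_temp_c": NumericRange(20.0, 30.0),
    "hold_time_h": NumericRange(2.0, 4.0),
}
batch_record = {"reaction_solvent": "DMF", "reaction_temp_c": 25.0, "hold_time_h": 3.5}

deviations = find_deviations(authorised, batch_record)
print(deviations)
```

In this made-up record the temperature and hold time conform, so only the solvent substitution is reported, without anyone having to read a lengthy summary document to spot it.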

How can these risks be addressed?

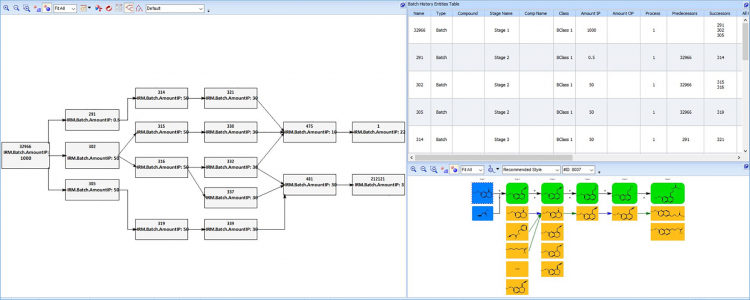

Digital systems would allow for a contextually relevant batch review. Specifically, comparison of batch datasets for the same materials (but produced at different times or at different manufacturing sites) allows for facile detection of quality outliers.
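As a rough illustration of outlier detection across batches of the same material, the sketch below flags results that sit far from the median, using the commonly used modified z-score (median absolute deviation, constant 0.6745, threshold 3.5). The choice of statistic is an assumption made for this example, and the batch identifiers and impurity results are invented.

```python
from statistics import median

def robust_outliers(results, threshold=3.5):
    """Flag batches whose result is far from the median (modified z-score)."""
    values = list(results.values())
    med = median(values)
    mad = median([abs(v - med) for v in values])
    if mad == 0:
        return []  # no spread to measure against
    return [batch for batch, v in results.items()
            if 0.6745 * abs(v - med) / mad > threshold]

# Hypothetical impurity results (% area) for one material made at two sites.
results = {
    "A-001": 0.05, "A-002": 0.06, "A-003": 0.05,
    "B-001": 0.07, "B-002": 0.21, "A-004": 0.06,
}

print(robust_outliers(results))
```

A median-based statistic is used here because a single extreme batch distorts the mean and standard deviation far more than it distorts the median.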

Furthermore, comparison of batch datasets across the finished product material’s genealogy can:

- allow stakeholders to pinpoint the originating source of quality issues for specific batches

- allow ‘leading’ quality trends within a material supplier network to be observed – permitting identification of risk early in the manufacturing process.
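Tracing a quality issue back through a material’s genealogy is essentially a graph walk. The sketch below assumes the genealogy is available as a mapping from each batch to the batches consumed to make it; that representation and every batch identifier are hypothetical.

```python
def upstream_batches(genealogy, batch_id):
    """Return every batch that fed, directly or indirectly, into batch_id."""
    seen = set()
    stack = [batch_id]
    while stack:
        current = stack.pop()
        for parent in genealogy.get(current, []):
            if parent not in seen:
                seen.add(parent)
                stack.append(parent)
    return seen

def suspect_sources(genealogy, flagged, batch_id):
    """Upstream batches that carry a quality flag, as candidate origins."""
    return sorted(upstream_batches(genealogy, batch_id) & flagged)

# Hypothetical genealogy: each batch maps to the batches it was made from.
genealogy = {
    "DP-100": ["DS-40"],
    "DS-40": ["INT-7", "INT-8"],
    "INT-7": ["RM-1", "RM-2"],
    "INT-8": ["RM-3"],
    "DP-101": ["DS-41"],
    "DS-41": ["INT-8"],
}
flagged = {"RM-2", "RM-9"}  # batches with a known quality observation

print(suspect_sources(genealogy, flagged, "DP-100"))
print(suspect_sources(genealogy, flagged, "DP-101"))
```

The same walk run in the other direction (from a flagged raw material to everything made from it) would support the ‘leading’ trend use case, identifying finished batches at risk before they are released.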

Speaking of analytical data, do you feel the value of analytical data is overlooked?

Certainly, purveyors of analytical data would agree that reviewing live analytical data is critical to intensive problem-solving challenges. However, some of the historical challenges of representing analytical experimentation results in a digital fashion – without significant data reduction/abstraction – have prevented the proliferation and inclusion of analytical data within quality stakeholders’ decision support systems.

What should stakeholders in this supply ecosystem do moving forward?

Our first recommendation is to establish digital analytical data exchange between contract manufacturers, contract analysers, pharmaceutical sponsors and regulatory authorities. While establishing the exchange mechanism is no small effort, ACD/Labs has worked with a variety of customers to enable this. This first recommendation should be extended to include careful assessment of the variety of formats, detector channels, processing methodologies and any validation-relevant metadata.

Our second recommendation is to establish digital batch record exchange, similar to the data exchange mechanism described above, and to associate those exchanged datasets with all batch record information across a product’s genealogy.

Certainly, the sharing of some trade secret information may be limited between stakeholders within a supply chain. However, these limitations should not put overall quality at risk, so the release of potentially confidential information should be carefully deliberated. As a minimum, incorporation of machine-readable batch operating parameters within drug master file submissions should be considered.
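What “machine-readable batch operating parameters” might look like in practice is sketched below using JSON. The structure and every field name are assumptions made for illustration; this is not a format defined by any health authority or vendor.

```python
import json

# Hypothetical batch record; all field names and values are illustrative.
batch_record = {
    "batch_id": "INT-7",
    "site": "site-B",
    "steps": [
        {
            "step": 1,
            "operation": "reaction",
            "parameters": {
                "solvent": "toluene",
                "temperature_c": 25.0,
                "hold_time_h": 3.5,
            },
        },
    ],
}

# Serialise for exchange, then parse it back as a receiving system would.
payload = json.dumps(batch_record, indent=2, sort_keys=True)
restored = json.loads(payload)

print(payload)
```

Because the parameters survive the round trip as typed values rather than free text, a receiving party could compare them programmatically against authorised ranges.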

Implementing these two recommendations allows stakeholders on-demand access to data from raw material to finished product, allowing for fully resolved quality traceability through the supply chain, even when work is outsourced to many partners across a global supply chain.

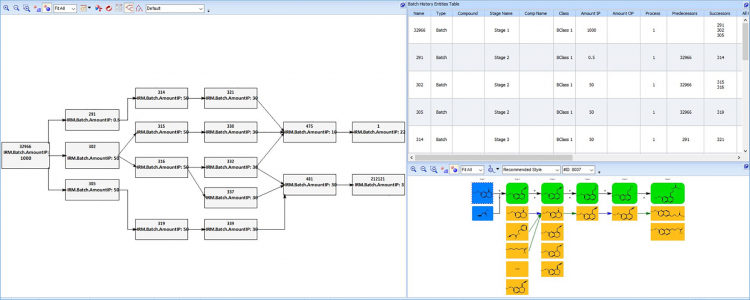

Our third and final recommendation is to extend these first two recommendations upstream into the product development lifecycle. By marrying supply chain datasets to their predecessors within research and development, labs can help stakeholders gain critical productivity and risk-mitigating insights. Imagine the effort when a new impurity is observed in a production batch of drug product or drug substance prior to market release. The traditional approach to determining whether this “new” impurity is truly new, or simply unrecognised because it was only observed during clinical process development, requires extensive human effort to scour the various data systems in which the relevant data are stored. At worst, it leads to isolation and elucidation efforts by supporting scientists, which can be costly and time consuming. Implementing the recommendations outlined above enables a relatively fast decision support query across chromatographic and spectral data – one of the truly unique capabilities of the ACD/Labs’ Spectrus Platform[3] and, specifically, Luminata®.[4]
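To illustrate the kind of query involved, the sketch below checks whether a newly observed impurity matches anything already recorded during development, using accurate mass within a ppm tolerance and relative retention time (RRT). This is a generic illustration and not a description of how Spectrus or Luminata work; the record structure, tolerances and all values are hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ImpurityRecord:
    name: str
    mz: float      # observed m/z
    rrt: float     # relative retention time
    source: str    # where the impurity was previously seen

def ppm_error(observed_mz, reference_mz):
    """Mass difference expressed in parts per million of the reference."""
    return abs(observed_mz - reference_mz) / reference_mz * 1e6

def find_known_matches(records, mz, rrt, ppm_tol=10.0, rrt_tol=0.02):
    """Historical impurities consistent with the new observation."""
    return [r for r in records
            if ppm_error(mz, r.mz) <= ppm_tol and abs(rrt - r.rrt) <= rrt_tol]

# Hypothetical development history.
history = [
    ImpurityRecord("Imp-A", 436.2340, 0.85, "development lot D-12"),
    ImpurityRecord("Imp-B", 452.2289, 1.12, "forced degradation study"),
]

matches = find_known_matches(history, mz=452.2301, rrt=1.13)
for record in matches:
    print(f"{record.name} previously seen in {record.source}")
```

RRT values are only comparable when the same chromatographic method was used, so a real query would also need to account for method differences; a match like this is a prompt for review, not a confirmed identification.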

In summary, the extensive effort to ‘digitally transform’ businesses across their supply chains is ongoing within many industries. Within the pharmaceutical industry, these efforts should be extended to include batch record data, analytical data and process development data across their highly distributed and global supply chains.

References

1. Farrukh M, Tariq M, Malik O, Khan T. Valsartan recall: global regulatory overview and future challenges [Internet]. NCBI. 2018 [cited 18 February 2020]. Available from: http://www.ncbi.nlm.nih.gov/pmc/articles/PMC6351967/

2. Supported Data Formats for ACD/Labs Software [Internet]. Acdlabs.com. 2020. Available from: https://www.acdlabs.com/products/fileformats/index.php

3. Collect, Analyze, Interpret, and Unify Chemical, Structural, and Analytical Data in a Live Searchable Environment | ACD/Spectrus Platform [Internet]. Acdlabs.com. 2020. Available from: https://www.acdlabs.com/products/spectrus/index.php

4. Software for CMC Product Development | ACD/Labs Luminata [Internet]. Acdlabs.com. 2020. Available from: https://www.acdlabs.com/solutions/luminata/index.php